Why Your GenAI Strategy Demands an All-Inclusive Data Modernization

Image Source: Bernard Marr & Co (For Illustrative purposes only)

A piecemeal approach won't work. You can't leave your business logic behind.

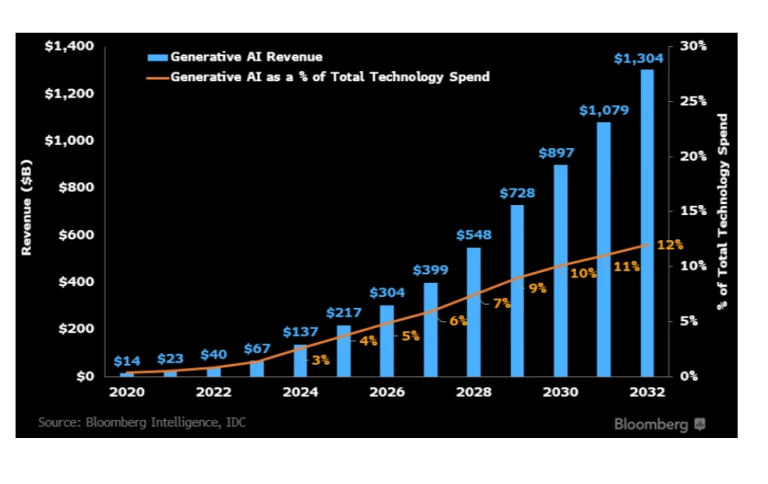

Every executive wants a Generative AI strategy. The mandate has come down: use AI. But as engineering and data leaders are quickly discovering, there’s a massive, unglamorous, and expensive prerequisite that the hype train conveniently skips: your data infrastructure is not ready.

That monolithic enterprise data warehouse (EDW) you’ve spent 20 years meticulously building is the very thing holding your AI strategy hostage.

Image Source: Bloomberg

The harsh reality is that most legacy data platforms are technically incompatible with the demands of modern AI/ML workloads. The business case for migrating to a modern data lakehouse is no longer just about "cloud transformation"; it has become a non-negotiable prerequisite for survival in the age of AI.

But even that realization leads to the biggest pitfall of all: the piecemeal migration.

The AI vs. Legacy Data Warehouse "Brick Wall"

Your legacy EDW (think Teradata, Netezza, or on-prem SQL Server) was a marvel of engineering… for 2005. It was designed to answer structured questions about the past: "What were our sales in the last quarter?"

Generative AI is designed to make predictive, creative, and complex judgments about the future based on all available information. This is a fundamentally different job, and your old system is technically incapable of performing it.

Here’s why:

- Fueling GenAI with Unstructured Data: GenAI models are fluent in human language, images, and audio. Your EDW is fluent in rows and columns. It was never designed to store, process, or query the massive volumes of unstructured and semi-structured data (such as documents, call logs, and support tickets) that AI thrives on.

- The Language of AI (Vector Embeddings): To "understand" data, AI models use vector embeddings, numerical representations that place similar concepts close together. Searching for "similar" concepts (the core of RAG, Retrieval-Augmented Generation) requires a vector index or vector database. This is simply alien technology to a traditional EDW.

- Real-Time Processing vs. Batch Reporting: Your EDW probably updates overnight in a batch window. An AI application needs to process information and provide an answer now. Modern data platforms are designed for the real-time streaming and low-latency queries that AI requires.
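To make the vector-embedding point concrete, here is a minimal sketch of the similarity search that sits at the core of RAG. The document names and three-dimensional vectors are hypothetical and chosen only for illustration; real embeddings come from an embedding model and have hundreds or thousands of dimensions, and a production system would use a vector index rather than a linear scan.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query, documents, k=2):
    """Return the ids of the k documents most similar to the query vector."""
    scored = [(cosine_similarity(query, vec), doc_id)
              for doc_id, vec in documents.items()]
    scored.sort(reverse=True)
    return [doc_id for _, doc_id in scored[:k]]


# Hypothetical toy embeddings (assumption: not produced by any real model).
documents = {
    "refund_policy": [0.9, 0.1, 0.0],
    "shipping_times": [0.1, 0.9, 0.1],
    "return_a_product": [0.8, 0.2, 0.1],
}
query = [0.85, 0.15, 0.05]  # stands in for "how do I get my money back?"

nearest = top_k(query, documents, k=2)
print(nearest)
```

The two refund-related documents rank above the shipping one even though no keywords are compared. A row-and-column engine built around exact matches and joins has no native equivalent of this nearest-neighbor lookup.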

So, the solution is easy, right? Just migrate to a modern platform. Except, it's not.

The "Missing Scope" Fallacy: Why Piecemeal Migration Fails

The most common and catastrophic mistake organizations make is treating modernization as a simple "data dump." They assume they can just "lift and shift" their database tables to a new platform, flip a switch, and call it a day.

This approach fails 100% of the time.

Why? Because the data itself is only half the story. The real value, the "brain" of your enterprise, isn't in the tables. It’s embedded in the intricate web of business logic and orchestration that surrounds them.


Image Source: Deng Xiang on Unsplash

We're talking about decades of accumulated knowledge, embedded in:

- Complex ETL (Extract, Transform, Load) jobs

- Thousands of stored procedures

- Data validation rules

- Complex orchestration scripts

- Access controls and lineage

This logic dictates how raw data is transformed into reliable information. It’s the formula for calculating customer lifetime value, the rules for flagging a fraudulent transaction, and the process for closing the books at the end of the quarter.

When you take a piecemeal approach ("Let's just move the Sales data first"), you break this logic. You sever the dependencies. You lose your single source of truth and end up with two broken systems instead of one working one. Business continuity grinds to a halt.
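A small sketch shows how a partial move severs dependencies. The object names and the dependency map below are hypothetical; in practice this graph would be extracted from the warehouse's own catalog, ETL definitions, and scheduler metadata.

```python
def broken_dependencies(dependencies, migrated):
    """Return (object, dependency) pairs that straddle the migration boundary.

    A pair is broken when exactly one side of it has been moved to the
    new platform, so the object can no longer reach what it depends on.
    """
    broken = []
    for obj, deps in dependencies.items():
        for dep in deps:
            if (obj in migrated) != (dep in migrated):
                broken.append((obj, dep))
    return sorted(broken)


# Hypothetical warehouse objects and what each one reads from.
dependencies = {
    "sales_fact": [],
    "customer_dim": [],
    "clv_procedure": ["sales_fact", "customer_dim"],
    "quarter_close_job": ["sales_fact", "clv_procedure"],
}

# "Let's just move the Sales data first."
severed = broken_dependencies(dependencies, migrated={"sales_fact"})
for obj, dep in severed:
    print(f"{obj} can no longer reach {dep}")

# Moving everything together leaves no edge crossing the boundary.
all_at_once = broken_dependencies(dependencies, migrated=set(dependencies))
```

Moving a single table is enough to strand both the lifetime-value procedure and the quarter-close job on the wrong side of the boundary, while moving the full set leaves nothing severed.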

The "All-at-Once" Imperative: Migrating Data and Logic

The only viable path forward is an all-inclusive approach. You must move the entire system, not just the data. This means capturing and migrating all the data, metadata, and business logic that give it context.

When you successfully replicate the entire system, including the complex orchestration, in the new environment, you achieve three critical things:

- You Ensure Consistency: Your reports still tie out, your numbers are still correct. You maintain a single source of truth.

- You Maintain Business Continuity: The business doesn't stop. The rules that run your company are preserved and functional.

- You Contextualize Your Data for AI: This is the most important part. By migrating the business logic, you give your new AI models the "owner's manual" for your data. They can now understand how you define a "good customer" or a "risky asset" and build their intelligence on top of that proven logic, rather than trying to re-guess 20 years of institutional knowledge.
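One way to check that reports "still tie out" is to fingerprint the same report on both platforms and compare the results. The sketch below is an assumption about how such a reconciliation could look, not a description of any particular tool; the row values are made up, and a real check would also need to normalize types and rounding between the two engines.

```python
import hashlib


def table_fingerprint(rows):
    """Order-independent fingerprint: row count plus a hash of sorted row digests."""
    digests = sorted(
        hashlib.sha256(repr(row).encode("utf-8")).hexdigest() for row in rows
    )
    combined = hashlib.sha256("".join(digests).encode("utf-8")).hexdigest()
    return len(rows), combined


def ties_out(legacy_rows, target_rows):
    """True when both systems return the same set of rows, in any order."""
    return table_fingerprint(legacy_rows) == table_fingerprint(target_rows)


# Hypothetical quarterly sales report pulled from each platform.
legacy_report = [("Q1", 100), ("Q2", 250)]
target_report = [("Q2", 250), ("Q1", 100)]   # same rows, different order
drifted_report = [("Q1", 100), ("Q2", 251)]  # migrated logic computes Q2 differently

print(ties_out(legacy_report, target_report))
print(ties_out(legacy_report, drifted_report))
```

Sorting the per-row digests makes the comparison insensitive to row order, which usually differs between engines, while any change in a computed value changes the fingerprint.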

The Evolving Landscape of Data Modernization

This "all-at-once" migration is, of course, incredibly complex. For decades, the only option was a brute-force manual effort: hiring armies of consultants to spend years (and millions) rewriting code by hand. That approach is slow, expensive, and notoriously prone to errors.

Image Source: Binmile

The market has responded with tools to accelerate the process. Integration platforms helped connect disparate systems. At the same time, specialized code-conversion tools emerged to automate parts of the problem, such as translating stored procedures.

While these solutions advanced the industry, a persistent challenge remains: achieving true, end-to-end automation of the entire system with high fidelity. Managing fragmented tools still requires a massive integration effort, and manual-heavy services are too slow to keep pace with the rapid advancements of AI.

This gap is where new platforms are focusing their efforts. A newer approach uses an AI-driven engine to regenerate the entire target system. The goal is to move beyond fragmented point solutions by automating not just metadata and data, but also complex ETL jobs and workflows. This high-accuracy, end-to-end automation enables the migration of the entire business logic, ensuring system consistency and providing the crucial, contextual foundation for modern AI workloads.

Your AI Strategy Is a Data Strategy

Don't let your GenAI ambitions outpace your infrastructure reality. Before you spend a dollar on a new AI model, you must first look at the crumbling foundation it will be built on.

An all-inclusive, logic-first data modernization isn't a detour from your AI strategy; it is and must be Step One.


:::info Rudrendu Paul, Debjani Dhar, and Ted Ghose co-authored this article.

:::
