The First Generation That Will Never Fully Own Its Thoughts

There has always been influence on human thinking: teachers, books, culture, and peers. But influence used to arrive after thought had already begun. For the first time in history, a generation is growing up with a system that participates before thoughts fully form. This is not about outsourcing thinking. It is about co-authoring it.

Modern AI does not wait for us to decide what we think and then respond. It completes sentences we haven’t finished, suggests directions we haven’t chosen, and reframes questions before we have committed to asking them. It sits in the pre-conscious layer of cognition where ideas are still fluid, half-shaped, and deeply personal.

That subtle shift changes everything.

Thinking Has Moved From Solitary to Shared

Previous technologies accelerated thinking. AI occupies it.

Search engines responded to intent. Calculators executed commands. Even social media amplified expression after the fact. But large language models operate in a different regime. They intervene at the moment of uncertainty – when we pause, hesitate, or feel incomplete.

That pause used to belong to us. Now it belongs to an autocomplete.

When an AI suggests a better way to phrase your thought, it does not replace the thought; it reroutes it. The difference is invisible but profound. Over time, our internal voice begins to harmonize with the machine’s defaults: clarity over ambiguity, coherence over exploration, plausibility over originality.

The result is not dependency. It is cognitive co-tenancy.

AI lives inside the thinking process the way infrastructure lives inside a city: rarely noticed, yet constantly shaping movement.

The Illusion of Independent Thought

Most people believe they are thinking independently because they feel autonomous. But autonomy today is increasingly retrospective, not generative.

We decide after the suggestions appear. We choose between the options already proposed. We refine instead of originating. The space of possible thoughts narrows before we even become aware of it.

This creates a dangerous illusion: “I chose this, therefore it is mine.”

But authorship is not the same as selection.

When AI consistently presents certain framings, metaphors, or solution patterns, it quietly defines the boundaries of “reasonable” thought. Over time, these boundaries harden not because they are enforced, but because they are convenient. The most powerful influence is the one that feels helpful.

Decision Ownership Is Quietly Eroding

There is another, more subtle shift happening beneath productivity gains: accountability drift.

When AI-assisted decisions succeed, we internalize credit. When they fail, we externalize blame. This asymmetry erodes responsibility without us noticing. Decisions become collaborative, but accountability remains individual. Over time, this weakens judgment, not strengthens it.

Judgment requires ownership. And ownership requires friction.

Why This Generation Is Different

Every generation has tools. This one has thought partners.

Children are learning to write with AI before they learn to struggle with blank pages. Students are learning to reason with systems that always respond confidently, even when wrong. Professionals are learning to think in dialogue with entities that never doubt themselves.

This does not make them less intelligent. It makes them differently shaped.

The risk is not that people will stop thinking. The risk is that they will stop experiencing thinking as something that emerges from silence, effort, and uncertainty. When uncertainty disappears, so does originality.

The Coming Premium: Intentional Solitude

If this trajectory continues unchecked, the most valuable cognitive skill will not be intelligence, speed, or even creativity. It will be intentional solitude. The ability to:

- Sit with unfinished thoughts

- Tolerate confusion

- Delay resolution

- Think without feedback

Silence is becoming scarce. And scarcity creates value.

In the same way that physical fitness became valuable in sedentary societies, cognitive independence will become valuable in AI-saturated ones.

Practical Solutions: How to Reclaim Thought Ownership

This is not a call to reject AI. That would be unrealistic. The solution is cognitive design, not abstinence.

1. Separate Thinking From Refining

Use AI only after you have produced a rough, imperfect version of your thought. Think on paper first. Write badly on purpose. Let AI refine, not originate.

This preserves authorship while retaining leverage.

2. Schedule “Unassisted Cognition”

Create explicit AI-free thinking windows. Journaling without prompts. Whiteboard sessions without tools. Walks where ideas are not immediately externalized.

Treat these sessions as mental training, not inefficiency.

3. Ask AI to Challenge, Not Complete

Instead of asking the AI to “write it better”, ask it which assumptions you are making, what an intelligent critic would object to, and where the idea is weakest.

This shifts AI from co-author to adversary, preserving your cognitive edge.

4. Build a “Thought Provenance” Habit

Regularly ask yourself:

- Why do I believe this?

- Where did this framing come from?

- Did this insight emerge, or was it suggested?

You don’t need perfect answers. The act of asking is enough.

5. Design Friction Back Into Your Process

Speed is seductive. Friction is protective. Delay publishing. Delay sending. Delay finalizing.

Give your thoughts time to mutate without assistance.

A New Definition of Intelligence

In the AI era, intelligence will no longer be defined by how much you know, how fast you produce, or how fluent you sound.

It will be defined by:

- How well you think when no one is helping

- How clearly you know what you believe

- How deliberately you choose when to invite assistance

The goal is not to think alone forever. The goal is to remember how.

Final Thought

This generation will not fully own its thoughts, and that may be inevitable. But ownership does not have to be absolute to be meaningful.

If we learn to intentionally reclaim authorship, even part of the time, we can preserve what makes thinking human: struggle, surprise, and self-recognition.

The future does not belong to those who think fastest with AI. It belongs to those who know when not to use it.

You May Also Like

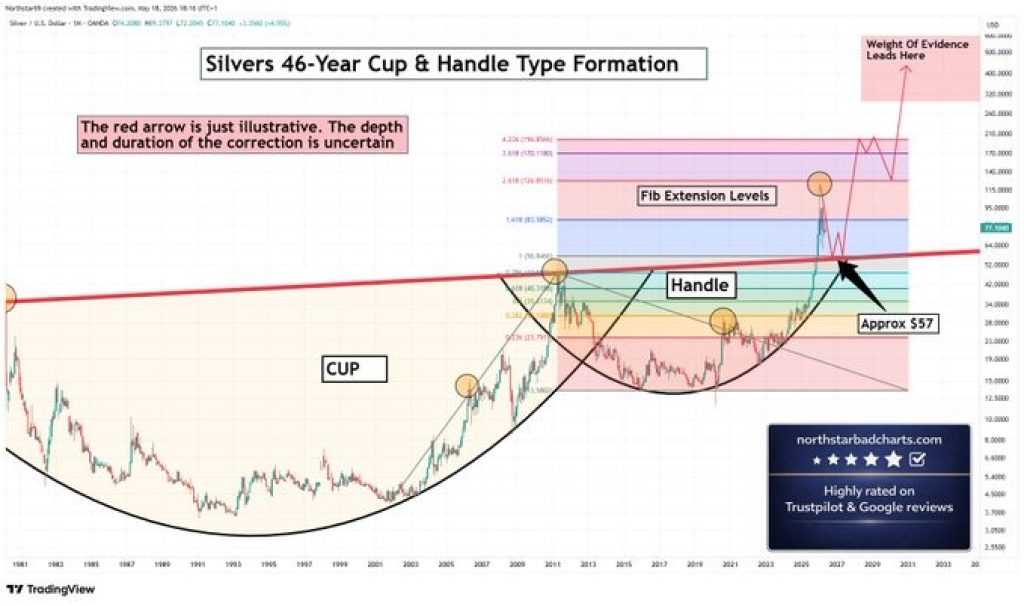

Silver Price Prediction: Cup and Handle Points to $196 – Why the Correction Was Always Part of the Plan

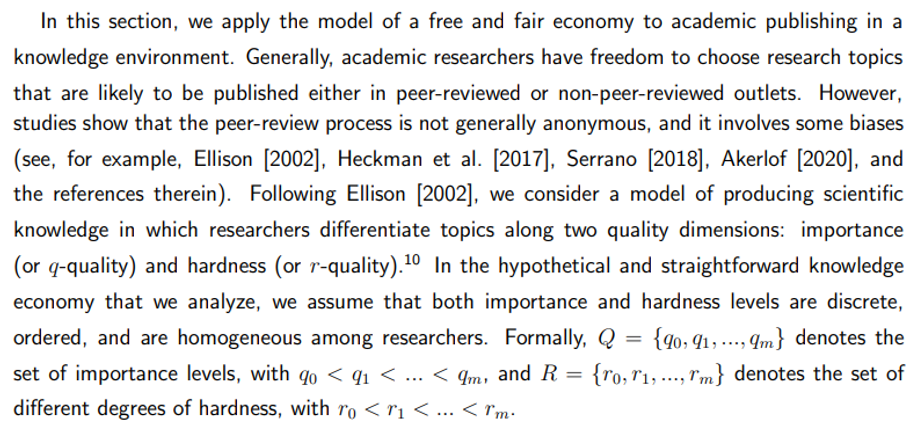

Academic Publishing and Fairness: A Game-Theoretic Model of Peer-Review Bias