Advertising’s AI moment: why trust is now the hardest thing to automate

AI has slipped into advertising quietly and then all at once. Tasks like planning, targeting, optimisation, and measurement can now happen very quickly. For many teams, that speed has become the headline: faster decisions, cleaner workflows, better returns.

What receives less attention is what this acceleration does to trust.

When AI begins to shape where brands appear, what content is rewarded, and which voices are amplified, it stops being a back-office tool and becomes part of culture. And culture matters.

Brands are discovering that efficiency alone does not protect reputation, creativity, or credibility. In some cases, it does the opposite.

But here’s the thing: when AI is designed to understand how people think, feel, and engage with content, it can begin to restore something advertising has been losing: relevance that feels human rather than imposed.

The industry is now facing a familiar tension in a new form: working out where automation strengthens advertising and where it begins to weaken it.

Context replaces control

For years, digital advertising tried to manage risk through restriction. Tools like keyword block lists provided hard exclusions and avoidance by default. These approaches promised safety, but they also flattened nuance and diversity. News coverage was treated as danger, social issues were flagged as toxic, and minority perspectives were quietly filtered out. AI has begun to change that dynamic, although only when used carefully.

More advanced contextual systems, grounded in AI and neuroscience, can now interpret real-time signals of interest, emotion, and intent rather than surface signals alone. Neuroscience frameworks help explain why certain contexts make people more attentive, more engaged, and more open, and they enable brands to deliver ads in moments when individuals are cognitively and emotionally receptive, not just when the content is broadly relevant. Rather than simply recognising what someone is reading, these systems interpret why they’re there and what they’re likely feeling. When advertising aligns with those moments and insights, it becomes part of the experience rather than an interruption.

This shift can also transform how brand safety is defined. Safety is now about appearing in the right places, with intention, without muting the complex world around us. Contextual understanding has become an ethical decision as much as a performance one, shaping which stories are funded and which voices remain visible.

There is also a wider implication here. When brands pull away from credible reporting environments in the name of risk avoidance, those spaces do not become quieter; they become weaker. Advertising money signals value. When brands withdraw from trusted journalism, misinformation does not disappear; it fills the space.

Automation sharpens process but dulls judgement

AI excels at pattern recognition. It can spot correlations at scale and surface insights humans would miss. Used well, this is powerful. It removes friction, gives teams time back, and lets people focus on decisions rather than spreadsheets.

Problems arise, however, when rudimentary automation is allowed to decide what good looks like. Campaigns start to resemble each other and creative becomes efficient rather than distinctive. Language smooths out. Risk tolerance narrows. Work performs, but it rarely surprises. Many brands recognise this feeling. Nothing is technically wrong, yet nothing feels memorable either.

Advertising depends on human tension: humour that divides opinion, cultural references that date quickly, and emotional cues that are difficult to capture through demographics or keywords alone. This is where AI grounded in neuroscience can play a different role. By analysing how people emotionally respond to content environments, it can help predict receptiveness and emotional alignment, while leaving meaning, storytelling, and creative intent firmly in human hands.

There is also a quieter concern inside agencies and brands. As agentic systems take over planning and buying, early-career roles shrink. Those roles are where people learn how media actually behaves; they are where instinct forms. Remove that layer and future leaders inherit systems they know how to operate but not how to question.

Judgement cannot be automated if it is never taught.

Bias does not disappear when it becomes invisible

AI systems are trained on history. History carries bias. When models learn from past media patterns, they absorb the same blind spots the industry has been trying to fix for decades. This creates a subtle risk, where outputs can quietly reinforce what has always been overrepresented while sidelining voices that were already underfunded or misunderstood.

Addressing this requires scrutiny around who trained the system, what content it learned from, and which outcomes are rewarded. Bias becomes an organisational fault as well as a technical one. This is why how AI is built matters as much as how it is used. Systems developed in-house, trained intentionally, and reviewed continuously offer greater control over alignment, representation, and bias mitigation. Internal review processes that actively test outputs help ensure that automation does not quietly reproduce the past.

Regulation is beginning to step in. The EU AI Act signals a shift toward clearer accountability. Rules help, but they are not enough on their own. Trust is not repaired by any single platform or policy. It depends on how advertisers, publishers, and technology providers behave together. Real responsibility still sits with the people deploying these tools every day.

Training is where intent becomes real

Every serious conversation about responsible AI eventually arrives at the same place: education. Not one-off sessions or tool demos, but ongoing literacy that teaches teams how to challenge outputs, spot distortion, and intervene when automation drifts too far from reality.

Practical training looks different depending on role. For creative teams, it means understanding where AI supports exploration and where it homogenises ideas. For planners, it means knowing when optimisation sacrifices context. For leaders, it means recognising that short-term efficiency gains can create long-term cultural costs.

The most effective programmes treat AI as something to be interrogated, not obeyed. Teams test systems on real work. They review unintended tone. They discuss representation. They document what breaks.

This approach slows things down slightly. That is the point. Trust rarely forms at machine speed.

Trust is built through placement, not promises

Audiences are paying attention to where brands show up: alongside what content, in what tone, and in whose voices.

This scrutiny is shaped by misinformation cycles, synthetic media, and declining confidence in institutions. Brands operate inside that environment whether they acknowledge it or not.

Supporting credible journalism matters more than ever, as a practical decision about where budgets land. Quality environments provide context, accountability, and standards that social virality cannot guarantee.

Trust is rebuilt through consistent behaviour, transparent systems, measured restraint, and clear choices about the kind of media ecosystem brands want to sustain, not just through statements about responsibility.

AI can help advertising become smarter. When grounded in an understanding of human attention, emotion, and intent, it can also help make advertising feel more relevant, more respectful, and more human. Left unchecked, it risks doing the opposite.

The industry is not being asked to slow innovation. It is being asked to steer it: to combine speed with judgement, automation with empathy, and performance with principle.

That balance will define whether AI becomes a creative partner or an invisible risk. The technology is already here. What matters now is how deliberately it is used, and what the industry decides is worth protecting as everything else accelerates.

You May Also Like

Robotics Automation Prototyping: Engineering Kinetic Agility into End-Effectors

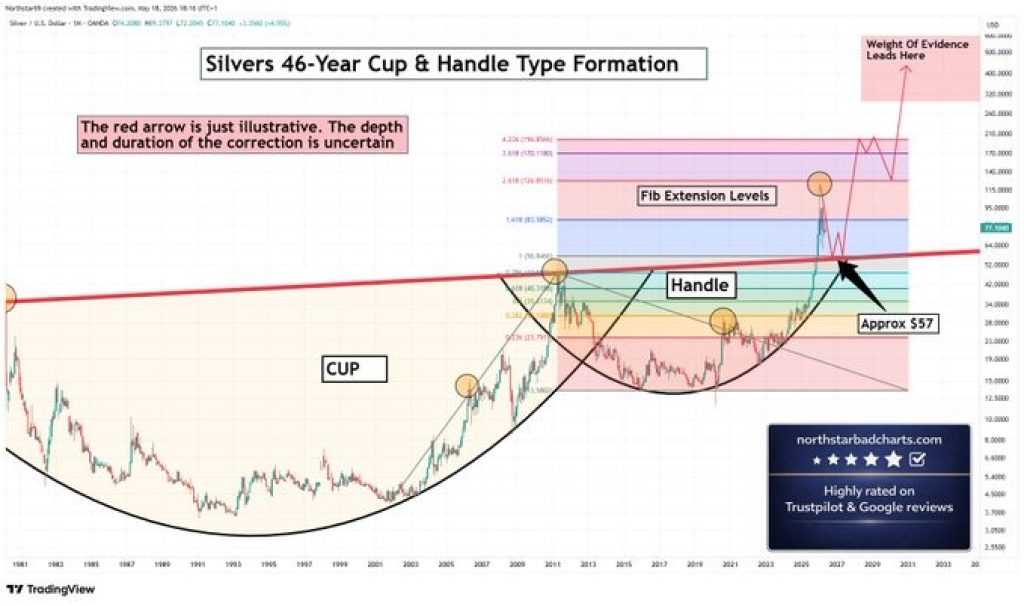

Silver Price Prediction: Cup and Handle Points to $196 – Why the Correction Was Always Part of the Plan