From Test Coverage to Business Confidence

Introduction

Most software teams measure quality using something called test coverage, a percentage that shows how much of the codebase was touched during testing. The higher the number, the safer the software feels.

For years, this was the accepted standard. Hit 80%, hit 90%, and the team felt confident shipping. Coverage became a proxy for quality itself.

But coverage only tells you one thing: which parts of the code ran during the test. Think of it like a fire drill where you check every room on a floor plan. You can confirm the rooms exist, but you can’t confirm the building is actually fireproof.

Coverage answers a narrow question:

“Did we execute this code during testing?”

The business, however, is asking something very different:

“Can customers trust this in production?”

Those two questions live in completely different worlds. When teams confuse them, the result is a dangerous false sense of security.

The False Comfort of High Coverage

Here’s what coverage does not measure: whether your system actually works under the conditions real users create. A piece of code can pass every test beautifully in a quiet, controlled test environment, and still collapse the moment real traffic hits it.

Modern AI tools have made this problem worse. They can generate tests automatically and push your coverage from 60% to 95% in hours. The reports turn green. Dashboards look pristine. Managers feel reassured. But all that happened was that more lines of code were touched, not that the system was proven reliable.

Coverage doesn’t tell you if your checkout page holds up when ten thousand people hit it at once. It doesn’t tell you what happens when a payment provider slows down, or when one part of your system quietly takes down everything connected to it. Three real scenarios show exactly how this plays out:

Checkout Under Concurrency

An e-commerce company runs a flash sale. Their checkout system has 95% test coverage: every rule tested, every calculation verified, every path confirmed. The tests all pass. Then the sale goes live, and hundreds of thousands of users hit “Buy” in the same second.

● The database, handling requests it was never stress-tested for, starts slowing down

● The inventory system, overwhelmed, times out

● The checkout retries the payment automatically, and charges some customers twice

● Orders complete without confirmation emails ever reaching customers

The aftermath:

● Customers are furious

● The support queue floods with complaints

● Revenue is refunded, and reputation takes a hit

The tests confirmed the logic was sound. What they never tested was what happens when everyone shows up at once, a scenario called concurrency, where many users interact with the same system simultaneously.
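The double charge in this scenario is the kind of failure an idempotency key is commonly used to prevent. What follows is a minimal sketch, not a real payment provider's API: `PaymentLedger`, its method names, and the `order-1001` key are all hypothetical. A retry that reuses the same key gets the original charge back instead of creating a second one.

```python
import threading
import uuid


class PaymentLedger:
    """Hypothetical in-memory stand-in for a payment service (illustration only)."""

    def __init__(self):
        self._lock = threading.Lock()
        self._charges = {}  # idempotency_key -> charge record

    def charge(self, idempotency_key, customer_id, amount_cents):
        # The lock makes "check, then record" atomic, so two concurrent
        # retries with the same key cannot both create a charge.
        with self._lock:
            if idempotency_key in self._charges:
                return self._charges[idempotency_key]
            record = {
                "charge_id": str(uuid.uuid4()),
                "customer_id": customer_id,
                "amount_cents": amount_cents,
            }
            self._charges[idempotency_key] = record
            return record

    def total_charged(self, customer_id):
        with self._lock:
            return sum(
                c["amount_cents"]
                for c in self._charges.values()
                if c["customer_id"] == customer_id
            )


ledger = PaymentLedger()
key = "order-1001"  # assumed convention: one key per order, reused on every retry
first = ledger.charge(key, "cust-1", 4999)
retry = ledger.charge(key, "cust-1", 4999)  # e.g. the checkout retrying after a timeout
```

In a real system the key lookup would usually live in the database or at the payment provider, so that it survives restarts and is shared across servers.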

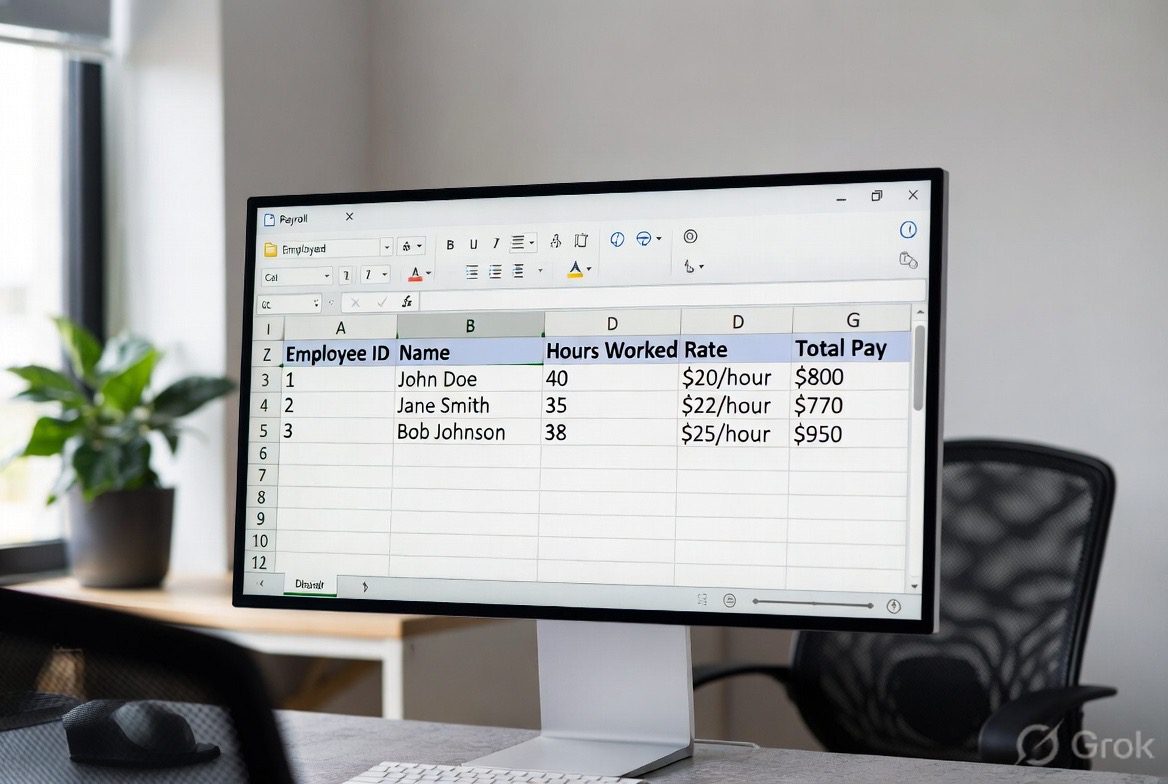

Payroll Under Concurrent Batch Execution

A payroll software company processes salaries for thousands of companies. Their system has 100% branch coverage: every salary formula tested, every tax rule verified, and every edge case handled. On the first of the month, payroll runs in multiple countries simultaneously.

● The system’s servers, running several jobs at once, start competing for the same database resources

● Some jobs time out mid-process

● Others retry and run the payroll twice

The result:

● Hundreds of employees are paid twice

● Hundreds more receive nothing

● Compliance reports flag inconsistencies

Every formula was correct. Every test passed. But no test ever simulated what happens when multiple jobs run at the same time and compete for the same resources, a condition called concurrency conflict. Coverage confirmed the math. It said nothing about the real-world environment the math had to survive in.
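One way to exercise this failure mode before production is a test that starts several workers at the same instant against the same payroll run. The sketch below assumes a hypothetical `PayrollRunRegistry` in which a worker must claim a run before paying it. The company and period values are made up. A real system would more likely rely on a database unique constraint or row lock than on an in-process lock.

```python
import threading


class PayrollRunRegistry:
    """Hypothetical registry: each (company, period) run may be claimed once."""

    def __init__(self):
        self._lock = threading.Lock()
        self._claimed = set()

    def try_claim(self, company_id, period):
        # Atomic check-and-add; returns True only for the first caller.
        with self._lock:
            run = (company_id, period)
            if run in self._claimed:
                return False
            self._claimed.add(run)
            return True


def simulate_concurrent_runs(workers=16):
    """Start all workers together and count how many actually pay."""
    registry = PayrollRunRegistry()
    payments = []
    payments_lock = threading.Lock()
    barrier = threading.Barrier(workers)  # releases every worker at once

    def worker():
        barrier.wait()
        if registry.try_claim("acme", "2024-06"):
            with payments_lock:
                payments.append("paid")

    threads = [threading.Thread(target=worker) for _ in range(workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return len(payments)


paid_count = simulate_concurrent_runs()
```

The barrier is the important part of the test design: without it the workers tend to run one after another, and the test quietly stops being a concurrency test.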

Payment Integration Under Network Instability

A fintech startup integrates a third-party payment gateway. Every test passes. The integration works perfectly in their test environment, where the internet is fast, stable, and predictable.

Production is different:

● Some payment requests drop mid-air because of unstable connections

● Others slow to a crawl and time out before a response arrives

● The payment provider starts throttling requests once traffic spikes

● The system retries failed payments and charges customers twice

● Some transactions freeze in a “pending” state for days

● Disputes pile up, and the company’s merchant account gets flagged

Every test had passed. But the test environment never simulated a slow network, a rate-limited API, or a third-party service under stress. Coverage confirmed the integration worked in isolation. It had no way to confirm it would survive the real internet.
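The conditions the test environment lacked can be approximated with a test double that injects them. The sketch below assumes a hypothetical `ThrottlingGateway` that rejects the first few calls the way a rate-limited API might, and a client that retries with exponential backoff. The class names, error type, and delay values are illustrative, not any provider's real interface.

```python
class RateLimitedError(Exception):
    """Raised by the fake gateway to mimic a 'too many requests' response."""


class ThrottlingGateway:
    """Hypothetical test double that rejects the first N calls."""

    def __init__(self, rejections):
        self.rejections = rejections
        self.calls = 0

    def charge(self, amount_cents):
        self.calls += 1
        if self.calls <= self.rejections:
            raise RateLimitedError("too many requests")
        return {"status": "succeeded", "amount_cents": amount_cents}


def charge_with_backoff(gateway, amount_cents, sleep, max_attempts=5, base_delay=0.5):
    """Retry throttled calls, doubling the wait after each rejection."""
    for attempt in range(max_attempts):
        try:
            return gateway.charge(amount_cents)
        except RateLimitedError:
            if attempt == max_attempts - 1:
                raise  # give up and surface the error to the caller
            sleep(base_delay * 2 ** attempt)


delays = []  # record the waits instead of really sleeping, so the test stays fast
gateway = ThrottlingGateway(rejections=3)
result = charge_with_backoff(gateway, 2500, sleep=delays.append)
```

A throttled call is safe to repeat because the request was rejected outright. A timed-out call is different: the charge may already have been applied, which is why retries after a timeout need an idempotency key or a status check first.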

This is the gap that costs businesses real money. And it’s the gap that coverage, by itself, cannot close.

Shipping Based on Risk, Not Pass Rates

Strong engineering teams don’t ask “Did everything pass?” before shipping. They ask a harder question: “Do we understand what could go wrong, and are we prepared for it?”

Before any release, they want honest answers to questions like:

● If this part of the system breaks, how much of the product breaks with it?

● Is the new feature wrapped in a feature flag, a simple on/off switch that lets us disable it instantly if something goes wrong, without touching the rest of the system?

● Can we see what’s happening inside the live system right now, or will we only find out something broke when customers start complaining?

● If we need to undo this release, can we do it in under ten minutes without breaking something else?

These are not things a test report can tell you. They are risk controls, and they’re what actually make a release decision defensible.

Teams that ship with genuine confidence build that confidence in layers. They look at how well the system is instrumented, meaning whether it has enough internal visibility to diagnose problems quickly.

They check that alerts are precise enough to catch real issues without constant false alarms. They define what “acceptable performance” means before going live, and they rehearse their rollback plan rather than just writing it down.

That picture typically includes:

● Observability: the ability to see inside a running system, like gauges on a car dashboard showing temperature, pressure, and speed

● Alerts that fire when something is actually wrong, not for every minor blip

● Agreed performance baselines, so everyone knows what “slow” means before users start noticing

● Error budgets: a pre-agreed tolerance for how much can go wrong before it becomes a real problem

● A tested rollback plan, not just a document nobody has tried

Test results are one input into this picture.

They are not the whole picture.
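The error budget idea reduces to simple arithmetic. As a sketch under assumed numbers (a 99.9% success target and one million requests in the window, neither taken from a real system), the budget is the number of failures the target still permits:

```python
def error_budget(slo_target, total_requests, failed_requests):
    """Summarise error-budget usage for one measurement window."""
    allowed = round((1 - slo_target) * total_requests)
    used = failed_requests / allowed if allowed else float("inf")
    return {
        "allowed_failures": allowed,
        "remaining_failures": allowed - failed_requests,
        "budget_used": used,
    }


# Assumed example: 99.9% target, 1,000,000 requests, 400 failures so far.
report = error_budget(slo_target=0.999, total_requests=1_000_000, failed_requests=400)
```

With these inputs the target permits 1,000 failed requests, so 400 failures consume 40% of the budget and leave 600 in hand. A team might agree in advance to pause risky releases once the remaining budget runs low.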

Quality at Scale Is About Containment

As a software product grows, something important changes. Early on, you can know every part of it. You can test every path. But once the system spans many servers, integrates with dozens of third-party services, and serves millions of users across different regions, you can no longer test your way to certainty. At that scale, some failures become inevitable.

The goal shifts. Quality at scale is no longer about preventing every failure.

It’s about making sure that when one part fails, the rest of the system keeps running, and that the damage is contained before customers even notice.

Think of it like compartments in a ship. If one compartment floods, the watertight doors close automatically. The ship keeps sailing.

The same principle applies to well-designed software:

● A problem in the notifications service shouldn’t break checkout

● If the recommendation engine goes down, the homepage still loads, just without recommendations

● The system detects the issue and restores itself in seconds, not hours

● Users might see a slightly slower page at worst, not an error message

This shift mirrors modern reliability thinking. Instead of assuming everything will work, you assume something will eventually break, and you architect the system to limit the damage when it does. The question is not whether something will fail, but how far the failure spreads when it does.

Teams build genuine confidence not by believing nothing will break, but by knowing exactly what they’ll do when it does.
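The recommendation-engine example above is often implemented with a circuit breaker and a fallback. The following is a minimal sketch, not a production library: `CircuitBreaker`, `broken_recommender`, and `render_homepage` are hypothetical names, and the thresholds are arbitrary. After repeated failures the breaker stops calling the broken dependency and serves the fallback instead.

```python
import time


class CircuitBreaker:
    """Minimal circuit breaker (illustrative only)."""

    def __init__(self, failure_threshold=3, reset_after=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_after = reset_after  # seconds to wait before a trial call
        self._clock = clock
        self._failures = 0
        self._opened_at = None  # None means the circuit is closed

    def call(self, func, fallback):
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.reset_after:
                return fallback()  # open: skip the failing dependency entirely
        try:
            result = func()
        except Exception:
            self._failures += 1
            if self._failures >= self.failure_threshold:
                self._opened_at = self._clock()
            return fallback()
        # A success closes the circuit and clears the failure count.
        self._failures = 0
        self._opened_at = None
        return result


recommender_calls = []


def broken_recommender():
    # Hypothetical dependency that is currently down.
    recommender_calls.append(1)
    raise ConnectionError("recommendation engine unavailable")


breaker = CircuitBreaker(failure_threshold=2)


def render_homepage():
    recommendations = breaker.call(broken_recommender, fallback=list)
    return {"products": ["featured-1", "featured-2"], "recommendations": recommendations}


pages = [render_homepage() for _ in range(5)]
```

All five page renders succeed with an empty recommendations list, and the failing service is only contacted twice before the breaker opens. That is the watertight door closing.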

Performance Is Business Logic

Speed is not a technical nicety. It is a business variable.

Google’s research on web performance found that even a half-second delay in page load time can reduce conversions meaningfully. A one-second slowdown on a mobile site can cut conversions by up to 20%. Users don’t consciously decide “this is too slow.” They just feel friction, lose confidence, and leave, often before they can explain why.

The effect is not imagined. It is measurable, consistent, and directly tied to revenue.

Test coverage says nothing about any of this. You can have 100% coverage and a checkout page that takes four seconds to load, and be losing customers every hour without knowing it. This is why monitoring and observability matter so much. They give teams a live window into how real users are actually experiencing the product:

● Latency distributions: not just average response time, but the full range, including the slow tail that affects real users

● Error rates by region, so a problem in one geography doesn’t hide behind a global average

● How saturated the system is under peak load: are we at 60% capacity or 98%?

● Real user behavior data, not just synthetic tests run from a data center

● Customer signals: complaints, drop-offs, and support tickets that reveal what dashboards miss

When engineering teams can connect their technical metrics to real user outcomes, quality stops being an internal score and becomes a business conversation.
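To see why the distribution matters more than the average, consider a sketch with made-up numbers: 90 requests that take 120 ms and 10 that take 4 seconds. The `percentile` helper below uses the nearest-rank method and is written for this example only.

```python
def percentile(samples, pct):
    """Nearest-rank percentile for an integer pct between 1 and 100."""
    ordered = sorted(samples)
    rank = (pct * len(ordered) + 99) // 100  # ceiling division, no float rounding
    return ordered[rank - 1]


# Assumed sample: 90 fast requests and 10 slow ones, in milliseconds.
latencies_ms = [120] * 90 + [4000] * 10

mean_ms = sum(latencies_ms) / len(latencies_ms)
p50_ms = percentile(latencies_ms, 50)
p95_ms = percentile(latencies_ms, 95)
```

The mean comes out at 508 ms and the median at 120 ms, both of which look tolerable. The 95th percentile is 4,000 ms, which is the number that describes what one in ten users actually experienced.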

From Pass Rates to Confidence Levels

A pass rate is binary: either the tests passed, or they didn’t. That’s a yes or no answer to a very limited question.

Confidence is something different. It’s built through layers of evidence, and each layer answers a question that the test suite alone cannot.

Instead of walking into a release meeting and saying “All tests passed,” teams with genuine readiness can say:

● We ran the most critical user journeys under twice the expected traffic, and they held

● We’ve tested our rollback; if this release needs to be reversed, we can do it in four minutes without data loss

● Every new feature has monitoring in place before users touch it

● No part of the product is slower than it was in the previous release

● We’re releasing to 5% of users first and watching closely before expanding

That is a fundamentally different standard than “the tests passed.” It’s quality expressed in terms that business leaders can actually evaluate.

Each of these layers contributes something the others can’t:

● Automated tests check that the code behaves correctly under normal conditions.

● Load testing checks that it holds up under pressure. Think of it as a stress test before the real race.

● Observability gives teams live visibility into a running system so they can catch problems early.

● Feature flags act as safety switches. They let teams release a feature to a small group first and expand carefully, or turn it off immediately if something goes wrong.

● Customer metrics close the loop by showing whether users are getting what they actually came for.

Together, these layers produce something a test report alone cannot: operational assurance — the confidence to ship knowing you can see what’s happening, recover quickly if needed, and protect the people using the product.
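The "release to 5% of users first" step is commonly built on deterministic bucketing: each user is hashed into one of 100 buckets, and the flag is on for buckets below the rollout percentage. A minimal sketch, assuming a hypothetical `in_rollout` helper and a made-up feature name (`new-checkout`):

```python
import hashlib


def in_rollout(user_id, feature, percent):
    """Return True if this user falls inside the rollout percentage.

    Hashing feature and user together gives every user a stable bucket
    from 0 to 99, so the same user sees the same behaviour on every request.
    """
    digest = hashlib.sha256(f"{feature}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100
    return bucket < percent


# Hypothetical usage: 5% rollout of a feature called "new-checkout".
users = [f"user-{i}" for i in range(10000)]
enabled = [u for u in users if in_rollout(u, "new-checkout", 5)]
share = len(enabled) / len(users)
```

Because the bucket is fixed per user, raising the percentage only adds users and never swaps them, and setting it to 0 acts as the kill switch.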

Why Coverage Alone Cannot Create Trust

Customers don’t know what test coverage is. They don’t care.

What they experience is whether the product does what they came to do: whether their payment went through, whether the page loaded before they gave up, and whether the order arrived. Their trust is built or broken entirely by that experience.

A product with 70% test coverage but strong monitoring, fast rollback, and careful performance management can be far more reliable in practice than a product sitting at 99% coverage with no visibility into how it behaves once it’s live. Coverage is a measure of testing activity. What customers experience is the outcome of much more than that.

Coverage is a testing metric. Confidence is an operational state, the result of monitoring, rehearsed recovery plans, and understood risk. Trust is the business outcome that customers extend when those things are working. They are related, but they are not the same thing.

Treating them as the same thing is how green dashboards end up masking broken user experiences.

How QA Builds Business Confidence Beyond Test Coverage

QA, quality assurance, is often thought of as the team that runs tests and reports numbers. But its real job is answering a harder question:

Will this system hold up for real customers, under real conditions, at the moment it matters most?

Answering that question goes well beyond running test suites and checking percentages. It means probing the system the way production will:

● Were the most important user flows tested under realistic traffic, not just light test conditions?

● Did we simulate what happens when many users hit the same resource at the same time?

● What happens when an external service the product depends on slows down or stops responding? Did we test that scenario?

● Is the monitoring in place before users ever touch the feature, not bolted on afterward when something breaks?

● Has the team actually practiced the rollback procedure, not just written it in a document?

Research published in Accelerate found that the highest-performing engineering teams treat quality not as a final gate but as an ongoing signal of stability and delivery health. Site Reliability Engineering offers the same principle from an operational angle: build systems that contain failure gracefully, not systems designed around the assumption that failure will never happen.

Test coverage tells you which parts of your code ran. That’s useful. But it doesn’t tell you whether users can trust it, whether it holds under pressure, or whether the team can recover quickly when something breaks.

Conclusion

Shifting from a coverage-first mindset to a confidence-first mindset is not about abandoning testing. It’s about understanding what testing can and cannot tell you, and building the other layers that cover the rest.

Real quality is defined by what happens in production: how the system behaves under load, how quickly it recovers from failure, how clearly it surfaces problems, and how well understood the risks are before anything ships.

Automated testing is one essential layer of that. So are load testing, observability, performance engineering, controlled rollouts, and clear risk ownership. None of them replaces the others.

When teams can walk into a release conversation and speak in terms of resilience, risk, and readiness, not just pass rates, quality stops being a QA statistic and becomes something the whole business can act on.

That’s the moment engineering maturity and business reality finally speak the same language.

References & Further Reading

● Google — Why Speed Matters

https://web.dev/why-speed-matters

● Google Site Reliability Engineering — Monitoring Distributed Systems

https://sre.google/sre-book/monitoring-distributed-systems

● Forsgren, N., Humble, J., & Kim, G. — Accelerate: The Science of Lean Software and DevOps

https://itrevolution.com/product/accelerate

● Google Cloud — DORA Metrics

https://cloud.google.com/devops

About the author

Adewale Adekomaiya is a Senior QA Architect who designs intelligent automation and performance ecosystems across web and mobile platforms.

He works at the intersection of AI, engineering velocity, and system resilience, helping teams ship faster without compromising reliability. His philosophy: quality must be measurable, visible, and tied directly to business impact.

https://www.linkedin.com/in/adewaleadek/

The post From Test Coverage to Business Confidence first appeared on Technext.
