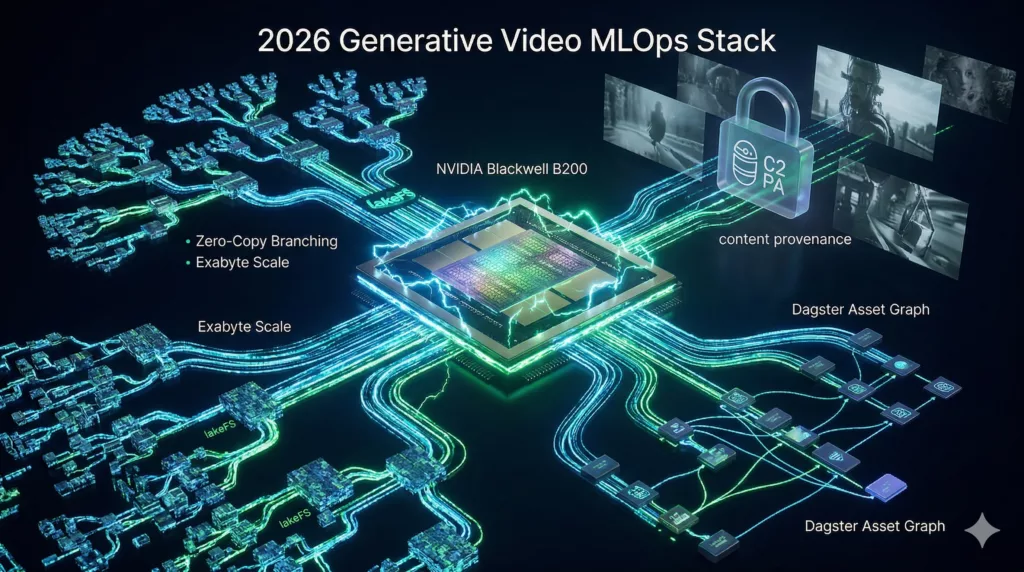

The 2026 MLOps Stack: Essential Tools for Image & Video Generation

Is your video production pipeline still running on 2024 experiments, or is it ready for the “Rubin era” of 2026?

Generative video has moved from a research novelty to a foundational part of enterprise IT. This shift requires a technical stack that handles the massive spatial-temporal data of 4K video. Success now depends on specialized MLOps that can monitor “creative” nuances and manage petabytes of data without doubling your storage costs.

Read on to learn how the latest hardware and “zero-copy” data strategies are defining the 2026 video production standard.

Key Takeaways:

- Compute power is led by NVIDIA’s B200 (Blackwell), offering 192GB HBM3e and up to 15x the inference speed of H100, while the L40S is 35% cheaper per-token.

- Data versioning at petabyte scale requires lakeFS, which uses zero-copy branching to create isolated data branches in milliseconds, unlike traditional Git LFS.

- The orchestration stack is defined by Dagster’s asset-centric workflows and NVIDIA Dynamo Triton’s dynamic batching for high-throughput serving.

- Traceability is mandatory; C2PA signing is required by August 2, 2026, and observability shifts to monitoring latent space for creative drift.

How Does the Silicon Translate to Ultra-Low Latency Serving?

The silicon landscape in 2026 is dominated by NVIDIA’s Blackwell architecture, which has redefined performance-to-power ratios. While the H100 (Hopper) remains the industry’s reliable workhorse, the B200 is now the standard for frontier-scale video training and high-throughput serving, delivering up to 15x the inference performance of previous generations.

GPU Benchmark: B200, H100, and L40S

The B200’s primary advantage in generative video lies in its 192GB of HBM3e memory and 8 TB/s bandwidth. This allows massive diffusion models to reside entirely on-chip, eliminating the latency penalties of model sharding.

| Feature | NVIDIA B200 | NVIDIA H100 (SXM) | NVIDIA L40S |

| Architecture | Blackwell | Hopper | Ada Lovelace |

| VRAM | 192 GB HBM3e | 80 GB HBM3 | 48 GB GDDR6 |

| Memory Bandwidth | 8,000 GB/s | 3,350 GB/s | 864 GB/s |

| TDP (Power) | 1000W (700W-1000W) | 700W | 350W |

| Primary Use Case | Frontier Training / LLM | Production Workhorse | Visual Inference / RAG |

| Inference Speed | 15.0x (vs H100) | 1.0x (Baseline) | 0.4x – 0.6x |

- The B200 Advantage: Approximately 57% faster training than the H100, largely due to its massive memory allowing for doubled batch sizes (e.g., from 2048 to 4096).

- The L40S Sweet Spot: Despite lower raw speeds, the L40S offers a 35% lower cost-per-token rate than high-end units, making it the ROI-optimal choice for fine-tuning and visual inference where horizontal scaling is preferred.

High-Throughput Serving with NVIDIA Dynamo

As of March 2025, NVIDIA consolidated its serving stack under the NVIDIA Dynamo Platform. The successor to Triton, NVIDIA Dynamo-Triton, is the industry standard for managing the complex orchestration of agentic and video-generation requests.

Key Innovations in Dynamo-Triton:

- Disaggregated Serving: Dynamo separates the “Prefill” (context processing) and “Decode” (token/frame generation) phases onto different GPUs, allowing each to be optimized independently.

- Dynamic Batching: Triton waits for a configurable max_queue_delay_microseconds to group independent requests into a single GPU batch, maximizing saturation without blowing the latency budget.

- TensorRT Ensembles: In 2026, a standard SDXL or video pipeline is served as an ensemble. The Text Encoder, UNet, and VAE are separate but interlinked optimized entities, achieving a 2.6x speedup over native PyTorch.

Hardware Selection Strategy

- Choose B200: For frontier model training ($>200B$ parameters) or serving ultra-long context windows that require 192GB to avoid OOM errors.

- Choose H100/H200: For proven production stability and workloads using models up to 70B parameters.

- Choose L40S: For visual AI applications and RAG where power efficiency and cost-per-inference are the priority.

Can Your Data Infrastructure Keep Pace with Your B200 Cluster?

In 2026, video datasets often reach petabyte scale, creating a “data tsunami” that breaks traditional version control like Git LFS. The industry has standardized around lakeFS, an open-source system that provides Git-like semantics (branch, commit, merge) directly over object storage like AWS S3 or Azure Blob.

Zero-Copy Branching: Instant Experimentation

The core innovation of lakeFS is its Zero-Copy Branching. When a researcher wants to test a new video subset—such as removing clips with high motion blur—they create a new branch.

- Metadata-Only Operations: Creating a branch takes milliseconds regardless of dataset size because no video files are actually copied.

- Isolation: GPUs can immediately begin training on the new branch in total isolation, avoiding the costs and delays of data duplication.

- Graveler Engine: Under the hood, the Graveler storage engine maps logical paths to physical S3/Azure objects using a versioned K/V store. Commits are stored as RocksDB-compatible SSTables, making merges and diffs linear to the change size rather than the total dataset size.

Multi-Stage Pipeline Management

Modern video pipelines are rarely linear. LakeFS allows teams to version-control every stage of the transformation:

- Raw Ingest: The initial “source of truth” branch.

- Preprocessing: A branch where frames are extracted or multimodal captions are added.

- Latent Generation: A branch for model-ready tensors.

By treating the training dataset as a Release Artifact, teams use lakeFS to “freeze” data and label it with a tag (e.g., video-train/v2026-Q1). This tag is stored alongside the model run ID, ensuring 100% reproducibility for audits or debugging.

2026 Data Versioning Comparison

| Feature | lakeFS | Git LFS | DVC (Standard) |

| Scaling Limit | Exabyte Scale | Small-to-Mid Repos | Terabyte Scale |

| Branching Speed | Instant (Zero-Copy) | Slow (File-based) | Metadata-based |

| Architecture | Metadata Overlay on S3 | Extension to Git | Code/Data coupling |

| Primary Use | Production Data Lakes | Assets in code repos | Individual ML projects |

“Moving Compute to Data” with lakeFS Mount

A major shift in 2026 is the lakeFS Mount capability. Rather than downloading massive datasets to a local NVMe, engineers “mount” a lakeFS repository as a virtual local directory.

- Prefetching & Caching: The mount utilizes advanced caching to speed up data retrieval, ensuring that training loops aren’t bottlenecked by S3 latency.

- Virtual Access: This allows deep learning frameworks (PyTorch, TensorFlow) to interact with remote petabyte-scale data as if it were on a local drive, dramatically reducing compute idle time and storage costs.

The Bottom Line: In 2026, lakeFS has become the “Git for Data,” allowing enterprise AI teams to manage multimodal assets with the same discipline and speed as their source code.

How Do You Orchestrate Pipelines on a 192GB VRAM Architecture?

In 2026, the model registry has evolved to manage “Compound AI Systems.” For generative video, a model version is no longer a single file but a coordinated collection of diffusion backbones, temporal modules, and text encoders. The 2026 stack prioritizes traceability and asset-centric orchestration to manage the high compute costs and complexity of these pipelines.

Model Registries: MLflow 3.x vs. W&B Weave

The leading registries have pivoted from simple experiment tracking to full GenAI Lifecycle Management.

MLflow 3.x: The “Logged Model” Paradigm

MLflow 3.x treats models as first-class entities.

- LoggedModels: This new object type captures metrics, parameters, and traces across all environments (Dev, Staging, Prod). In 2026, it natively supports “Compound Models,” allowing a single version tag to link a DiT (Diffusion Transformer) with its specific VAE and T5-XXL encoder.

- MLflow Assistant: An in-product chatbot (powered by Claude Code) helps engineers diagnose training failures or drift directly within the UI.

- Distributed Tracing: Now integrates with OpenTelemetry, allowing video generation traces to flow into enterprise tools like Datadog or Splunk for unified monitoring.

W&B Weave: The Agentic Trace Tree

Weights & Biases has transitioned into a “Developer-First Tracing” platform.

- Trace Trees: Using the @weave.op decorator, teams can visualize how a user prompt transforms into a video. It captures every step, from retrieval and latent denoising to final upscaling.

- Evaluations & Leaderboards: W&B Weave provides a rich UI for “LLM-as-a-Judge” and human-in-the-loop scoring. Teams use this to compare video fidelity scores (e.g., FVD or CLIP-score) side-by-side across different model iterations.

- The Feedback Loop: Weave captures user feedback (thumbs up/down) in production and links it back to the original training run, creating an automated “data flywheel” for model fine-tuning.

Orchestration: Dagster vs. Prefect

The orchestration of video pipelines—characterized by long-running GPU tasks—requires a shift from “tasks” to “assets.”

- Dagster (Software-Defined Assets): Dagster has emerged as the 2026 standard for engineering discipline. It focuses on the Asset Graph rather than a traditional DAG. If a video’s “low-res latent” asset is already materialized and the “upscaling” code changes, Dagster’s reconciliation logic re-runs only the upscaling step, saving thousands in compute costs.

- Prefect (Dynamic Workflows): Prefect remains the choice for teams requiring high flexibility. Its “Python-as-is” approach allows engineers to wrap any function with @flow without restructuring their code. It excels in real-time, event-driven video processing where tasks must scale dynamically across heterogeneous cloud providers.

2026 Orchestration Comparison

| Feature | Dagster | Prefect |

| Core Primitive | Software-Defined Assets | Task / Flow |

| Philosophy | Declarative (State-focused) | Imperative (Action-focused) |

| Observability | Visual Asset Lineage & Cost | Real-time Task Monitoring |

| Best For | High-fidelity Video Pipelines | General-purpose ML Automation |

| Scalability | Reconciliation-based (Re-use) | Dynamic Worker Polling |

Serverless Video Processing

For the final mile of delivery, tools like Nuclio and BentoML are frequently integrated. These platforms provide “Cold-Start Optimization,” ensuring that GPU-heavy video decoders can spin up in milliseconds to serve interactive requests without maintaining expensive, idle instances.

The 2026 Bottom Line: You no longer manage “models”; you manage “traces” and “assets.” The goal is not just to generate video, but to ensure that every frame has a verifiable lineage from the prompt to the final pixel.

How Do You Monitor Creative Drift in High-Fidelity Outputs?

The transition from text-only LLMs to multimodal video models has created a massive observability gap. In 2026, MLOps platforms have shifted toward Multi-Modal Observability, focusing on the high-dimensional latent space and “creative quality” metrics that traditional monitoring tools cannot capture.

Latent Space Monitoring: The New Frontier of Drift

Traditional monitoring detects drift in tabular features or model output probabilities. In generative video, drift occurs within the latent space—the compact, high-dimensional manifold where the model’s “understanding” of visual concepts resides.

- VSCOUT (VAE Self-Correcting Outlier Uncovering Technique): In early 2026, VSCOUT emerged as the standard for retrospective monitoring. It trains an Automatic Relevance Determination VAE (ARD-VAE) to learn the initial latent structure of training data.

- Ensemble-Based Detection: It uses an ensemble of filters to identify “special-cause” outliers or structural contamination within the latent subspace.

- Structural Drift: By identifying when a prompt’s latent representation falls outside the learned “In-Control” (IC) manifold, VSCOUT alerts teams to potential hallucinations or out-of-distribution (OOD) requests.

- Embedding Drift (Arize Phoenix & LangWatch): For systems using vector search to retrieve video frames, platforms now monitor Embedding Drift. If the distance between the “Live” embedding distribution and the “Training” distribution increases, it indicates that the model’s internal representation of concepts (like “photorealism”) is shifting.

Quantifying ‘Creative Quality’ in Pipelines

Monitoring “Creative Quality” is the primary challenge of video MLOps in 2026. This is addressed through a multi-dimensional framework—anchored by benchmarks like VBench and DynamicEval—evaluating video across six key axes:

| Quality Dimension | Description | Automated Metric / Tool |

| Aesthetic Quality | Visual appeal and artistic expressiveness. | CLIP Aesthetic Predictors / GMI Cloud Evaluators |

| Background Consistency | Stability of the scene during camera motion. | MS-Debias (Debiased Motion Smoothness) |

| Subject Consistency | Foreground objects maintain identity. | Track-FG (using point trackers like CoTracker) |

| Motion Smoothness | Lack of flickering or temporal artifacts. | VBench Motion Smoothness Score |

| Dynamic Degree | Intensity and naturalness of movement. | DynamicEval Benchmark |

| Semantic Alignment | Adherence to the prompt’s instructions. | VLM-as-a-Judge (e.g., Qwen2.5-VL) |

The Hybrid Evaluation Loop

Because automated tools still struggle with “narrative coherence,” 2026 pipelines employ a Hybrid Evaluation Loop:

- AI-Assisted Scoring: Models like DeepSeek-V3 analyze generated videos to assign initial scores across the six dimensions.

- RAG-Augmented Verification: A RAG system pulls reference frames from high-quality datasets to check if the generated video deviates from the “Gold Standard.”

- Human-in-the-Loop (HITL): Only videos with low confidence scores or high Semantic Entropy are escalated to human annotators for final Mean Opinion Score (MOS) validation.

The 2026 Bottom Line: Observability is no longer just about “is the model up?” but “is the model’s creativity drifting?” Trust in video models is built by monitoring the latent manifold where reality and simulation intersect.

Does Your Stack Meet the Mandatory C2PA Trust Requirements?

In an era of high-fidelity generative video, media traceability is no longer a luxury—it is an infrastructure requirement. In 2026, the C2PA (Coalition for Content Provenance and Authenticity) standard has matured into the bedrock of ethical AI, moving from theoretical open-source projects to native integration in silicon and enterprise workflows.

Implementation of C2PA Signing

By 2026, generative video startups and established media houses have moved beyond post-processing to native signing. This is primarily achieved through the C2PA Rust SDK (c2pa-rs), which provides the memory safety and concurrency required for high-throughput video pipelines.

- Thread-Safe Architecture: The SDK utilizes the Context API (leveraging Arc<Context>), allowing a single generation server to sign thousands of assets concurrently across multiple threads without memory leaks or global state conflicts.

- Format Versatility: Manifests are injected into JUMBF (JPEG Universal Metadata Box Format) boxes within MP4, AVIF, and JPEG containers, ensuring the provenance data is “carried” within the file itself.

- Enterprise Key Management: To prevent key leakage, 2026 pipelines utilize PKCS#11 to communicate with Hardware Security Modules (HSMs). This ensures that while the SDK performs the hashing and manifest construction, the actual Claim Signature is performed inside a secure, FIPS-validated hardware boundary.

Hard Binding vs. Soft Binding

The 2026 reality of social media “metadata stripping” has led to a dual-track strategy for provenance:

Hard Binding: The Integrity Anchor

Every C2PA manifest contains a Hard Binding—a cryptographic hash (SHA-256) of the asset’s bytes.

- Function: This acts as a digital seal. If a malicious actor alters even a single frame of a video, the hash no longer matches, and the C2PA validator will flag the content as “Tampered.”

- Weakness: It is fragile. Simple actions like re-encoding a video for a social media platform will break the hard binding, even if the content itself remains “truthful.”

Soft Binding: Durable Content Credentials

To survive the “black hole” of social media transcoding, 2026 stacks utilize Soft Binding (also known as Durable Content Credentials).

- Mechanism: The manifest data is paired with an Invisible Watermark (like SynthID) or a Perceptual Fingerprint.

- The Resolution API: If the embedded manifest is stripped during upload, a user’s browser or news client can detect the watermark and query a Manifest Repository via the Soft Binding Resolution API.

- Recovery: The service uses the watermark as a lookup key to retrieve the original signed manifest, re-attaching the provenance data to the “orphan” asset in real-time.

The 2026 Trust Hierarchy

| Layer | Technical Component | Purpose |

| Identity | X.509 / W3C Verifiable Credentials | Proves who or what signed the asset. |

| Binding | SHA-256 (Hard) + Watermark (Soft) | Connects the identity to the specific pixels. |

| Interoperability | C2PA Conformance Program | Ensures diverse tools (Pixel 10, Sony Cameras) speak the same “trust language.” |

By combining the cryptographic certainty of hard bindings with the resilience of soft bindings, the 2026 ecosystem ensures that even a screenshot of an AI-generated video can be traced back to its origin, making transparency a persistent property of digital media.

What are the Strategic Implications of This New MLOps Foundation?

This stack follows the “Data Gravity” principle—keeping high-performance compute physically and logically close to the data lake—while ensuring “Time-to-Trust” by embedding provenance directly into the production pipeline.

1. Compute & Silicon

- Frontier Training: 8x NVIDIA B200 (Blackwell) clusters. The B200’s 192GB of HBM3e VRAM is the minimum requirement for training modern video diffusion transformers (DiT) without excessive model sharding.

- Production Inference: NVIDIA L40S nodes. While the B200 handles the “heavy lifting,” the L40S provides the most cost-effective horizontal scaling for daily video generation and fine-tuning.

2. Data Logistics

- Version Control: lakeFS running on S3-compatible storage. Traditional Git LFS fails at petabyte scales; lakeFS provides Git-like branching and “zero-copy” snapshots, allowing GPUs to train on specific data versions without duplicating massive video files.

- Feature Store: Feast or Featureform for managing multi-modal embeddings and temporal metadata.

3. Model Governance & Tracking

- Registry: MLflow 3.x. The 2026 release of MLflow unifies tracking for “Compound AI Systems,” managing the backbone, temporal modules, and text encoders as a single versioned entity.

- Visual Evaluation: Weights & Biases (W&B Weave). Used for side-by-side video comparisons and human-in-the-loop (HITL) scoring. Weave’s “Trace Trees” are critical for debugging non-linear agentic workflows.

4. Serving & Orchestration

- Inference Server: NVIDIA Dynamo Triton. Optimized for high-throughput video via dynamic batching and TensorRT engines. It handles the complex “prefill” and “decode” phases of video generation with sub-millisecond overhead.

- Workflow Engine: Dagster. Preferred over Airflow for its “Software-Defined Asset” model, which allows teams to track the lineage of every video frame as it moves from latent space to final upscale.

5. Observability & Trust

- Latent Monitoring: Arize Phoenix. In 2026, observability has moved into the latent space. Phoenix detects “Embedding Drift,” alerting teams when a model’s creativity begins to deviate from its training distribution.

- Trace-Level Logging: LangWatch. Provides deep inspection of agentic “thought processes” and multi-step reasoning in complex video-editing agents.

- Provenance: c2pa-rs library. Every generated video is cryptographically signed at the point of exit using an HSM (Hardware Security Module) via PKCS#11, meeting the 2026 mandatory disclosure requirements of the EU AI Act.

The 2026 Operational Advantage

| Metric | Legacy Stack (2024) | Standard 2026 Startup Stack |

| Data Branching | Hours (Copying files) | Milliseconds (lakeFS Metadata) |

| Inference Efficiency | Static Batching | Dynamic Batching (Dynamo Triton) |

| Trust Status | “Trust Me” / Watermark | Cryptographically Signed (C2PA) |

| Evaluation | Manual Review | LLM-as-a-Judge + W&B Weave |

Conclusion: Strategic Implications for the Creative Enterprise

In 2026, generative video MLOps has reached industrial maturity. The focus is now on serving content at scale with predictable costs. Blackwell-class silicon provides the necessary hardware power, offering a 5x increase in inference throughput for massive models. However, the real success comes from the software layers that manage these systems.

To succeed, you must master several key technologies:

- Zero-Copy Data Versioning: Tools like lakeFS allow you to branch and version datasets like code. This speeds up experiments without the cost of duplicating data.

- Latent Space Monitoring: Implementing frameworks like Visual and Textual Intervention (VTI) helps reduce hallucinations. These tools monitor the model’s internal “thinking” space to ensure stable and realistic video.

- C2PA Authenticity: The C2PA standard provides the “nutrition label” for digital content. By August 2, 2026, meeting these transparency rules will be a legal requirement under the EU AI Act.

The 2026 MLOps stack is the engine for a new era of visual communication. By building a verifiable and robust foundation today, you can turn AI from a potential risk into a high-performance business asset.

Contact us for an agentic AI consultation to build your video MLOps strategy.

FAQs:

What are the best MLOps tools for image generation in 2026?

The MLOps stack described is optimized for generative video but utilizes industry-standard tools for GenAI lifecycle management, which are generally applicable to high-fidelity image generation. Key tools for the 2026 pipeline include:

- Model Registry/Tracking: MLflow 3.x for managing “Compound AI Systems” (e.g., linking a diffusion backbone with its encoder) and Weights & Biases (W&B Weave) for visualizing the generation process via “Trace Trees” and managing human-in-the-loop (HITL) evaluation.

- Orchestration: Dagster is preferred for its “Software-Defined Assets” model, ensuring reliable lineage and cost-saving reconciliation of materialized assets.

- Observability: Arize Phoenix is used for “Latent Monitoring,” specifically to detect “Embedding Drift” which signals that the model’s internal representation of concepts is shifting.

How do I manage multi-terabyte datasets for video model training?

The industry standard for managing petabyte-scale video datasets in 2026 is lakeFS, an open-source system that runs on object storage like AWS S3 or Azure Blob.

- Zero-Copy Branching: lakeFS is centered on this innovation, which allows researchers to create a new, isolated data branch for an experiment in milliseconds, regardless of the dataset size. No video files are duplicated, which dramatically reduces storage costs and setup time.

- Scalability: The system is designed for Exabyte Scale, far surpassing the limits of traditional version control like Git LFS.

- Data as a Release Artifact: Teams use lakeFS to “freeze” datasets with a version tag (e.g., video-train/v2026-Q1), ensuring 100% reproducibility for auditing and debugging model runs.

Is it cheaper to run video inference on-prem or in the cloud in 2026?

The document does not provide a direct comparison of on-premise versus cloud costs. However, the hardware selection strategy focuses on cost-effectiveness and ROI for different scales:

- Cost-Effective Choice: The NVIDIA L40S is cited as the ROI-optimal choice for visual AI applications and RAG workloads due to its 35% lower cost-per-token rate compared to high-end units, making it ideal for horizontal scaling of daily video generation.

- High-Throughput Serving: The NVIDIA Dynamo Triton inference server is the standard for high-throughput serving, using techniques like Dynamic Batching to maximize GPU saturation and minimize latency overhead.

How do you monitor ‘Creative Quality’ in an MLOps pipeline?

Monitoring has shifted from simple uptime checks to Multi-Modal Observability focused on the model’s internal “creative drift” within the high-dimensional latent space.

- Latent Space Monitoring: Tools like VSCOUT are used for retrospective monitoring. This technique trains a VAE to learn the “In-Control” (IC) manifold of the training data and alerts teams if a generated prompt’s latent representation falls outside this learned structure, indicating a potential hallucination or out-of-distribution (OOD) output.

- Quantified Framework: Creative quality is assessed across a multi-dimensional framework using benchmarks like VBench and DynamicEval on six key axes:

- Aesthetic Quality

- Background Consistency

- Subject Consistency

- Motion Smoothness

- Dynamic Degree

- Semantic Alignment (Adherence to the prompt)

- Hybrid Evaluation Loop: For critical or low-confidence outputs, a loop combines AI-Assisted Scoring (using VLM-as-a-Judge models), RAG-Augmented Verification (pulling reference frames), and Human-in-the-Loop (HITL) validation to ensure narrative coherence.

What is the standard 2026 stack for a generative AI startup?

The standard 2026 startup stack for generative video is built on five strategic pillars, prioritizing traceability and cost-effective scaling:

- Compute & Silicon: NVIDIA B200 (Blackwell architecture) for frontier model training and NVIDIA L40S for production inference.

- Data Logistics: lakeFS on S3-compatible storage for “zero-copy” data versioning.

- Model Governance & Tracking: MLflow 3.x for model registry and W&B Weave for visual evaluation and “Trace Trees.”

- Serving & Orchestration: NVIDIA Dynamo Triton for dynamic batching inference and Dagster for workflow management based on “Software-Defined Assets.”

- Observability & Trust: Arize Phoenix for latent drift monitoring and the c2pa-rs library for mandatory cryptographic signing (Provenance) via a Hardware Security Module (HSM).

You May Also Like

Solana Defends Meme Coin Surge as Network Stress Test

What Are Instagram Engagement Pools and How Do They Really Work?