Yield Energy Launches a First-of-Its-Kind Agriculture-Focused DERMS Solution to Transform On-Farm Operations into Grid Resources

Rebrand from Polaris Energy Services marks major milestone as Yield Energy activates 200+ MW of ag flexibility, including 100+ MW enrolled in PG&E’s agriculture-specific Hourly Flex Pricing pilot

SAN DIEGO, Jan. 20, 2026 /PRNewswire/ -- Yield Energy, formerly Polaris Energy Services, today announced the launch of the Yield Edge DERMS, the first distributed energy resource management system (DERMS) built specifically for agriculture and designed to unlock grid-ready energy flexibility from farms. The launch coincides with the company’s rebrand to Yield Energy, reflecting its evolution into a hardware-agnostic energy platform built around a deep AgTech partner ecosystem and designed to deliver utility-grade grid services at scale.

With more than 200 MW of agricultural load under management, including over 100 MW enrolled in PG&E’s agriculture-specific Hourly Flex Pricing (HFP) pilot, Yield Energy is redefining how on-farm operations support the grid while generating new recurring revenue for growers.

Backed by nearly $3 million in funding from the California Energy Commission (CEC), Yield Energy has validated its platform through state-supported work, confirming that agricultural operations can deliver automated, reliable, utility-grade flexibility at scale. This validation enables utilities to confidently tap agricultural loads while ensuring growers benefit financially — without changing the way they farm.

The Yield Edge DERMS currently orchestrates mainly irrigation pumps and is expanding to cover all on-farm DERs, including cold storage, EV and equipment chargers, solar arrays, batteries, and on-site generation, transforming everyday farm assets into fast, clean, dispatchable virtual power plants that respond to grid needs in real time.

“Agriculture has always had the potential to be one of the grid’s most powerful partners — it just needed the right tools,” said Tyler Nuss, CEO of Yield Energy. “We’ve proven that on-farm operations can deliver reliable, grid-ready flexibility at scale. Yield connects that flexibility to the operational demands of today’s grid, creating new revenue for growers while delivering capacity that’s faster, cleaner, and far more affordable than new infrastructure.”

Yield Energy integrates seamlessly with a broad ecosystem of AgTech partners — including WiseConn, Farmblox, LUMO, Ranch Systems, Swan Systems, Netafim, and Verdi — enabling pumps, sensors, and automation hardware to participate automatically in demand response and other grid programs, with fast response times and minimal disruption to farm operations.

This combination of state-validated technology and deep AgTech collaboration has already delivered strong performance across California, including:

- 100% average demand response performance across enrolled devices

- 67% demonstrated load-shift potential during peak hours

- $20,000+ in annual revenue per grower, on average

- More than 10,000 on-farm devices enabled on the platform

“Partnering with Yield Energy is transforming what’s possible for our growers,” said a WiseConn spokesperson. “With a single integration, our irrigation technology can now unlock new revenue, tap into more utility programs, and deliver measurable value back to farms — all while strengthening the grid.”

By aligning agricultural operations with utility needs, Yield Energy delivers a new category of clean, flexible capacity: large, responsive loads that can shift within minutes, support localized grid reliability, and do so at a dramatically lower cost than new storage or infrastructure upgrades — creating a scalable pathway for utilities to integrate on-farm resources into grid planning while strengthening the economic resilience of growers.

To learn more, go to yieldenergy.com.

About Yield Energy

Yield Energy is the leading provider of energy flexibility technology for agriculture. Formerly Polaris Energy Services, Yield Energy connects on-farm energy resources to the grid, enabling growers to earn revenue while supporting grid reliability. With more than 200 MW of agricultural load under management, Yield Energy partners with utilities and AgTech innovators to deploy scalable, hardware-agnostic virtual power plant programs built for the future of the grid. Learn more at yieldenergy.com.

Media Contact:

Alexandra Pony

407878@email4pr.com

250.858.0656

View original content to download multimedia: https://www.prnewswire.com/news-releases/yield-energy-launches-a-first-of-its-kind-agriculture-focused-derms-solution-to-transform-on-farm-operations-into-grid-resources-302664764.html

SOURCE Yield Energy

You May Also Like

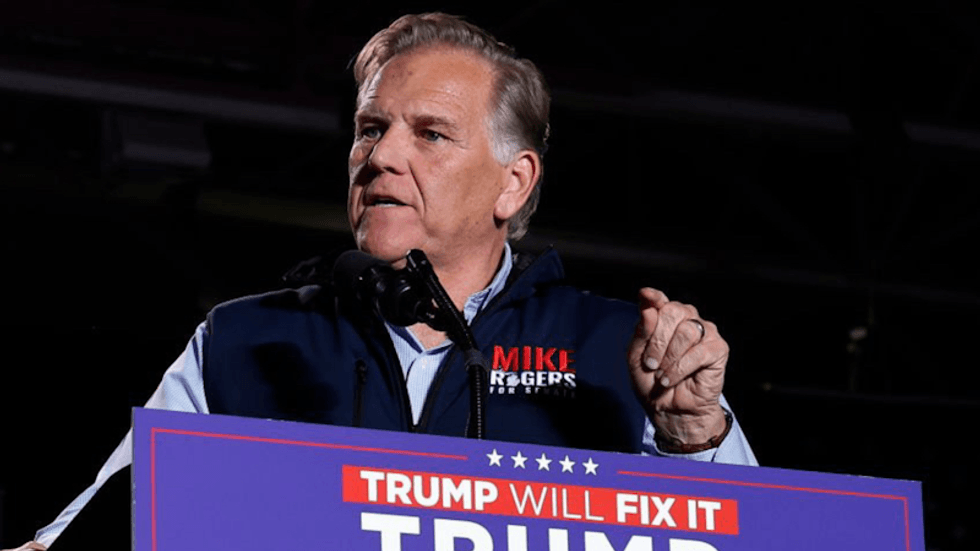

Republicans deploy ground troops in the states to execute 'the plan'

GOP Senate hopeful under fire over ties to church rocked by child sex abuse scandal