Like Paystack, African tech companies should have safe AI adoption policies

Artificial intelligence is rapidly reshaping the global technology sector. In recent months, major technology firms, including Amazon, Block, Oracle, and Meta, have accelerated investment in AI-driven systems while trimming parts of their workforce.

From AI coding assistants generating software to automated customer service systems and intelligent analytics platforms replacing manual data work, companies increasingly see automation as a route to efficiency.

The promise is clear: leaner operations, faster decision-making, and lower costs.

Yet this shift also raises a deeper question about the future of work and the role of human oversight in an automated economy. For Africa’s fast-growing technology ecosystem, the conversation goes beyond workforce changes. It centres on whether companies are adopting artificial intelligence safely and responsibly.

Across technology hubs in Lagos, Nairobi, and Cape Town, startups are embracing AI at remarkable speed. Fintech platforms are among the most enthusiastic adopters, using machine learning systems to detect fraud, automate compliance checks and analyse customer behaviour across complex financial environments.

The advantages are difficult to ignore. AI can process huge volumes of transaction data in seconds, spotting suspicious patterns that traditional systems might miss. For fintech companies managing payments across borders and currencies, these capabilities can significantly improve reliability and security.

AI slowly replacing the human workforce

However, innovation is currently moving faster than regulation in many African markets. Policies governing the use of artificial intelligence are still emerging, and oversight frameworks remain fragmented. Without clear guidelines, companies risk deploying powerful systems without fully understanding their legal, ethical and operational consequences.

Algorithms that influence financial decisions without adequate oversight can introduce serious vulnerabilities. These include data privacy breaches, flawed risk assessments and automated actions that affect customers without a clear path for human review.

As adoption accelerates, governance is becoming just as important as innovation.

Paystack’s AI governance blueprint

One African fintech offering a practical model for responsible AI adoption is Paystack.

Rather than allowing rapid experimentation without guardrails, the company has developed a structured governance approach designed to manage risk while still encouraging innovation.

At the heart of this strategy is a simple philosophy. As the company states in its governance documentation, “AI should make financial systems safer, not riskier, and more dependable, not harder to trust.”

This mindset shapes how new AI tools are evaluated across the organisation.

Instead of allowing individual teams to experiment independently, Paystack maintains what it describes as “a central inventory of AI systems in use, including what they’re used for, the data they interact with, and the level of risk involved”.

This living registry gives the company visibility across departments and helps prevent unreviewed or duplicate tools from quietly entering internal workflows.

Every proposed AI tool must be documented before approval. Teams are required to outline the system’s purpose, ownership, cost, and the type of data it processes. They must also explain why that data can be used and identify potential risks.

According to the company, “Each proposal is reviewed centrally before approval, so every AI system in use has clear accountability and oversight from the start.”

Paystack

This evaluation applies to everything from advanced fraud-detection systems to everyday productivity tools. For instance, Paystack uses AI assistants such as Gemini for internal productivity, while development teams rely on coding tools like Cursor, GitHub Copilot and Claude Code during software development.

However, these tools are introduced cautiously. The company emphasises that “their value doesn’t come from performance alone. It comes from how they’re governed.”

Security and privacy checks are therefore mandatory before any adoption decision. Teams must examine how the tool handles data, whether that information is used to train external models, and what safeguards exist to protect sensitive information.

Notably, Paystack places strict limits on the types of AI tools permitted in regulated contexts. Free AI tools are generally prohibited for high-risk or regulated use cases, a precaution designed to avoid unencrypted data sharing or uncontrolled model training.

Certain applications also receive additional scrutiny. As the company explains, “Use cases like fraud detection, automation, and agentic coding receive deeper review because they can affect customers, merchants, or the reliability of our systems.”

Human oversight remains a central safeguard within this framework. Paystack emphasises that “AI tools are treated as assistants, not decision-makers, and teams remain responsible for validating outputs.” For example, when automated monitoring systems detect suspicious transactions, human analysts review the flagged activity before any account restrictions are applied.
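For technically minded readers, the review rules described above can be expressed as a short program. The sketch below is a hypothetical illustration in Python, not Paystack's actual system: the field names, the `review` and `apply_restriction` functions and the outcome strings are all assumptions. It encodes only the rules the company's documentation describes: complete documentation before approval, no free tools in regulated or high-risk contexts, a check on whether a vendor trains external models on company data, deeper review for fraud detection, automation and agentic coding, and a human sign-off before any account restriction.

```python
from __future__ import annotations

from dataclasses import dataclass

# Use cases the article says receive deeper review (labels are hypothetical).
DEEP_REVIEW_USES = {"fraud_detection", "automation", "agentic_coding"}


@dataclass
class AIToolProposal:
    """One entry in a hypothetical central AI inventory (illustrative fields)."""
    name: str
    purpose: str
    owner: str
    monthly_cost_usd: float
    data_types: list[str]
    data_justification: str       # why this data may be used
    known_risks: list[str]
    use_case: str
    is_free_tier: bool
    regulated: bool
    vendor_trains_on_our_data: bool


def review(p: AIToolProposal) -> str:
    """Return a review outcome for a proposal (assumed rules, for illustration)."""
    # Documentation must be complete before central review can proceed.
    if not (p.purpose and p.owner and p.data_justification and p.known_risks):
        return "rejected: incomplete documentation"
    # Free tools are not permitted for regulated or high-risk use cases.
    if p.is_free_tier and (p.regulated or p.use_case in DEEP_REVIEW_USES):
        return "rejected: free tool in regulated/high-risk context"
    # Security and privacy check: is our data used to train external models?
    if p.vendor_trains_on_our_data:
        return "rejected: data used for external model training"
    # High-impact use cases are escalated rather than approved directly.
    if p.use_case in DEEP_REVIEW_USES:
        return "escalated: deep review"
    return "approved: standard review"


def apply_restriction(flagged: bool, analyst_approved: bool) -> bool:
    """Restrict an account only if the model flags it AND a human analyst confirms."""
    return flagged and analyst_approved
```

The design treats the model as an assistant: a fraud flag on its own never restricts an account, and high-impact tools are escalated rather than approved automatically.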

A wake-up call for African tech founders

The rapid adoption of artificial intelligence offers African startups a powerful opportunity to compete globally. However, speed alone is not enough.

Artificial Intelligence (AI) in Africa

Replacing large parts of operational workflows with automated systems may improve efficiency on paper. Without strong governance, however, companies risk exposing sensitive customer data, introducing algorithmic bias and undermining user trust.

The Paystack model highlights a different approach. By embedding accountability, human oversight and regulatory awareness into its AI strategy, the company demonstrates that innovation and responsibility do not have to compete.

As artificial intelligence becomes increasingly woven into Africa’s digital economy, the conversation must move beyond adoption alone to transparent, human-centred AI policies.

The post Like Paystack, African tech companies should have safe AI adoption policies first appeared on Technext.

You May Also Like

Proxy Network Crushed: 369,000 Hacked Routers Taken Offline in Crypto Fraud Bust

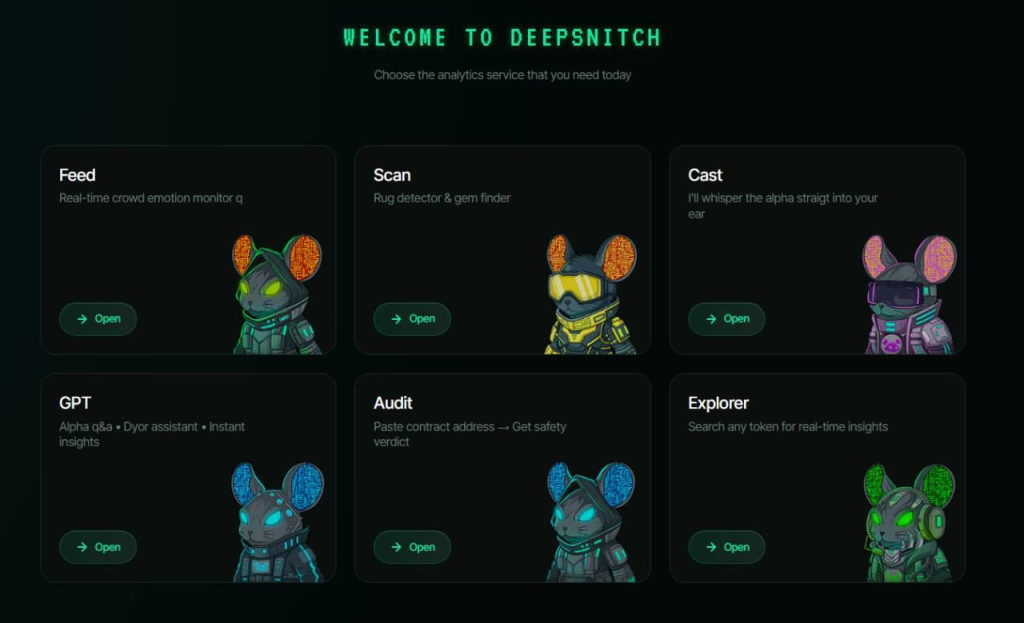

DeepSnitch AI Launch Date 2026: Ethereum Mandates Core Pillars While Bitcoin and NEAR Falter Against a 100x DSNT Lifeline