SOL ETF Approval Looms Amid Record Inflows Into Solana Products

TLDR

- An expert has predicted that the SEC could approve the pending SOL ETF filings later this week.

- Solana ETPs have reached a record $5.1 billion in total assets under management.

- Recent amendments to ETF filings now include staking features to enhance fund rewards.

- Major issuers, such as Grayscale, Bitwise, and Canary, are actively pursuing approval for SOL ETFs.

- A public letter from issuers urges the SEC to allow liquid staking tokens in ETF structures.

A leading analyst expects the SEC to approve SOL ETF filings within days, as Solana ETPs post record-breaking inflows. Bitwise, Grayscale, and others have amended filings to include staking, signaling strong issuer confidence. Meanwhile, total assets in Solana-based exchange products have surpassed $5.1 billion, more than doubling previous records.

SOL ETF Issuers Increase Pressure with Staking Proposals

Grayscale, VanEck, 21Shares, Bitwise, and Canary are all pursuing approval for a SOL ETF in the United States. Recently, Bitwise, Grayscale, and Canary amended their S-1 filings to include staking mechanisms that earn native rewards. These amendments highlight growing issuer interest in turning SOL ETF products into income-generating vehicles.

https://x.com/TheCryptoLark/status/1975147566351237315

Furthermore, these firms sent a public letter to the SEC requesting approval for liquid staking tokens (LSTs) within ETF structures. They argued that LSTs could increase efficiency and turn a SOL ETF into a prototype for tokenized finance. “Integrating staking aligns with Solana’s native design and strengthens investor returns,” the letter emphasized.

This change could reshape how ETFs operate by letting funds use proof-of-stake rewards to enhance fund value. With top issuers backing the proposal, the SEC faces mounting pressure to finalize its stance. If approved, these funds would be among the first U.S.-based crypto ETFs with direct staking features.

Solana ETPs Hit $5.1B AUM Amid Surging Interest

CoinShares confirmed that Solana investment products attracted $706 million in inflows during the latest reporting week. This pushed the total assets under management for all Solana ETPs to a new record of $5.1 billion. Previously, the all-time high stood at just $311 million in July.

These figures reflect a sharp increase in institutional interest despite partial government shutdowns and regulatory delays. According to CoinShares, “This week’s Solana inflow is the highest on record for any altcoin-based ETP.” The surge suggests that investors view the SOL ETF as a legitimate and scalable investment vehicle.

The only existing SOL ETF in the U.S. market is the REX Shares Solana Staking ETF (SSK), holding over $406 million in assets. Its continued growth further reflects Wall Street’s appetite for Solana exposure. This momentum adds weight to predictions of imminent SEC approval.

Jupiter ETP Launch and Futures ETFs Add Momentum

21Shares recently launched the Jupiter ETP (AJUP) on the SIX Swiss Exchange, increasing global access to the Solana ecosystem. The new ETP provides direct exposure to Jupiter, Solana’s leading decentralized liquidity platform. Jupiter currently handles over 90% of Solana transactions and $8 billion in weekly trading volume.

Cumulative trade volume on Jupiter has now crossed $1 trillion, demonstrating the platform’s expanding reach and influence. Institutional investors are using it to access deeper liquidity and price discovery. The AJUP product reflects the ecosystem’s growing legitimacy in traditional markets.

Meanwhile, futures-based SOL ETF products have surpassed $1 billion in total inflows across all issuers. These futures instruments, despite regulatory hurdles, show sustained demand among professional investors.

The post SOL ETF Approval Looms Amid Record Inflows Into Solana Products appeared first on CoinCentral.

You May Also Like

The Stunning ASEAN Winner Emerges As Manufacturing Shifts Accelerate

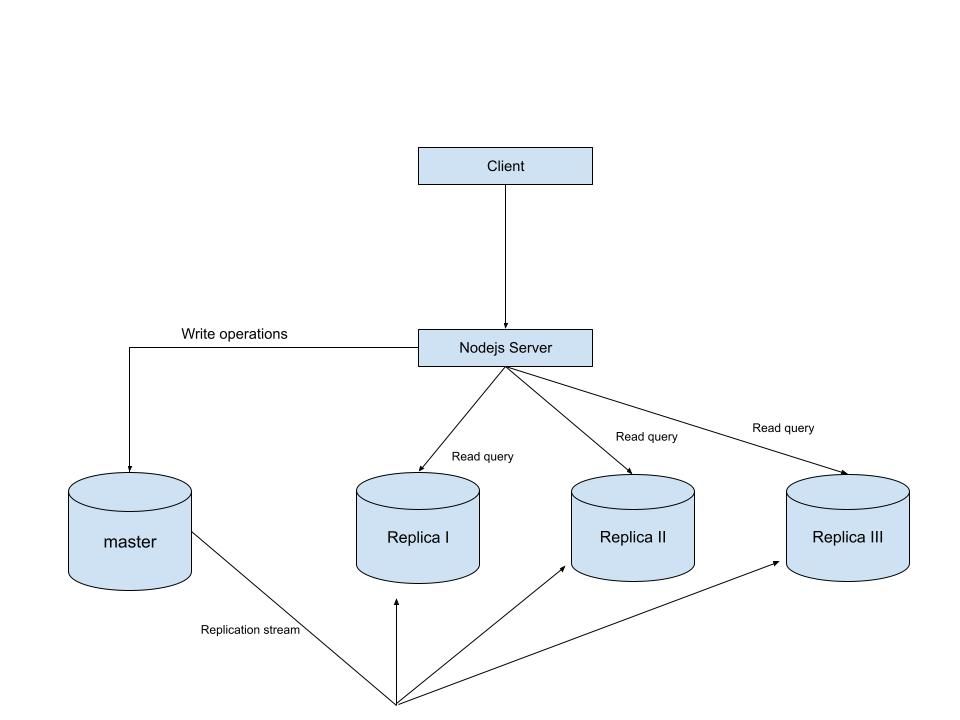
