Because of deepfakes, digital evidence authentication will never be the same again.


Federal prosecutors indicted an innocent person on fabricated audiovisual evidence last winter. Nobody challenged the file as a deepfake. The fake only came out when the confidential informant who produced it pleaded guilty to obstruction of justice at his own sentencing hearing.

The case appears in the first federal survey of judges on how courts handle deepfake challenges, released March 25, 2026, by the Federal Judicial Center. The response rate was 45 percent. Of the 931 federal judges and magistrates who responded, only 15, about 2 percent, had ever fielded a challenge to audiovisual evidence as a deepfake. Two-thirds of those 15 had seen just one such challenge across calendar years 2024 and 2025. Most cases were civil.

Late last year, federal and state judges told reporters they were not ready for AI-generated evidence in court. The new survey shows what “ready” looks like from the bench. The Texas indictment shows why the count of past challenges does not measure the size of the problem.

The Federal Judicial Center pulled four of the challenged cases from PACER. One was tossed on a procedural deadline before the court reached the merits. Another turned out to involve a zoomed-in clip of evidence already admitted. A zoomed-in clip is not the kind of problem the Advisory Committee on Evidence Rules is trying to address.

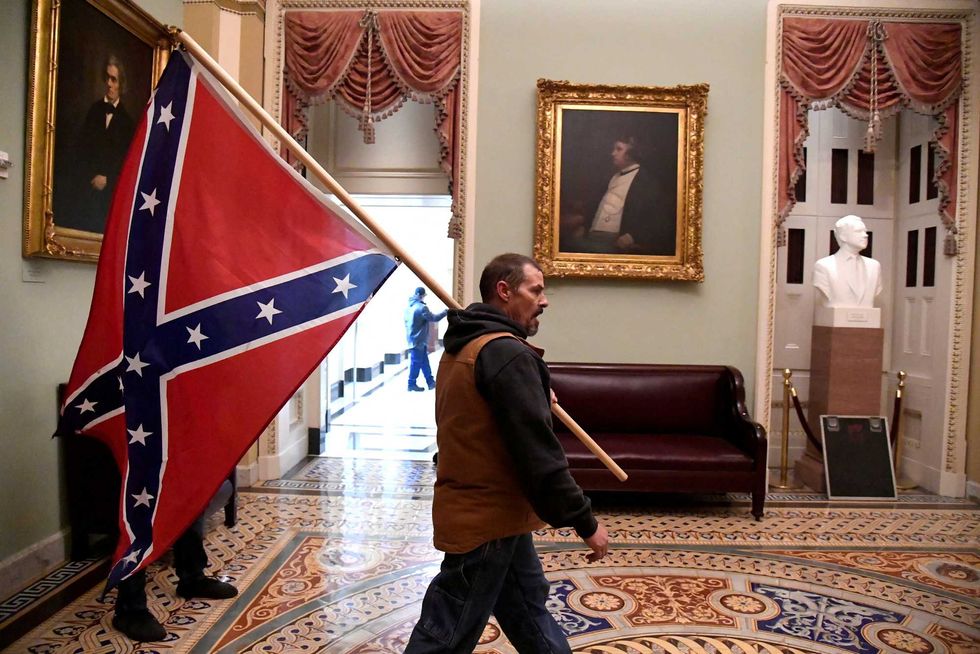

In the Western District of Texas case, a confidential informant produced fabricated audiovisual material to federal prosecutors. That material supported an indictment against a person whose case the government later dismissed without explanation. The fabrication only came out when the informant pleaded guilty to obstruction of justice at his own sentencing hearing.

The most damaging deepfake in the survey reached federal court through a guilty plea. No one in the original prosecution challenged the audiovisual material as fabricated. Counsel for the dismissed defendant did not have the file in hand to raise a deepfake objection at the time it mattered.

That is the canary in the 2 percent statistic. The figure counts deepfake challenges raised in federal court. It does not count fabrications that worked. Some of the most damaging fakes get through because nobody on the defense side knew to challenge them.

The Bar Federal Judges Just Set

Of the 914 judges who had not encountered a deepfake challenge, 745 said they would demand an initial showing before inquiring into authenticity. That is 82 percent. The 15 judges with first-hand experience were closer to evenly split.
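The percentages quoted from the survey can be checked with simple arithmetic. The short sketch below recomputes them from the counts reported in this article; the variable names are illustrative, and the rounding is ours rather than the Federal Judicial Center's.

```python
# Counts as reported in this article; names are illustrative only.
respondents = 931             # judges and magistrates who responded
with_challenge = 15           # respondents who had fielded a deepfake challenge
no_challenge = 914            # respondents who had not encountered one
would_require_showing = 745   # of those, would demand an initial showing


def share(part: int, whole: int) -> float:
    """Return part as a percentage of whole."""
    return 100 * part / whole


challenge_pct = share(with_challenge, respondents)
showing_pct = share(would_require_showing, no_challenge)

# Print one decimal place so the rounding behind the headline figures is visible.
print(f"Fielded a deepfake challenge: {challenge_pct:.1f}%")
print(f"Would demand an initial showing: {showing_pct:.1f}%")
```

Rounded to whole numbers, the two shares come out to the 2 percent and 82 percent figures used in this article.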

When pressed on what the showing should look like, 679 judges wrote in answers. The largest group, 42 percent, would accept minimal evidence: an affidavit, technical work pointing to inauthenticity, or comparable corroboration. Another 35 percent would settle for a reasonable argument, a good-faith basis for asserting falsification beyond speculation.

Smaller shares would demand qualified expert testimony, sworn statements, or stronger authentication from the offering party. A handful would let it turn on the facts of the case.

Proposed Rule 901(c) would build on the existing framework of Federal Rule of Evidence 901. The new wording would require a challenger to put forward evidence sufficient to support a finding of fabrication before the court probes further. A bare assertion would not clear the bar. Federal judges, by and large, arrived at that position without waiting for the rule.

What Actually Clears The Bar

The judges sorted the kinds of attacks they would credit along two axes.

The first is the level of showing, the proof the challenger has to bring. That can be an affidavit, a technical analysis, or a corroborating fact.

The second is the nature of the attack. A direct attack points to something in the file itself, such as a glitch in the video or a feature that does not match. An indirect attack points outside the file, such as an alibi, where an external fact contradicts the depicted location, person, or event.

Both axes line up with digital forensic work. Reading a file for direct tells is one job. Reading the device that supposedly produced or stored the file is a separate one. It pulls in different records: timestamps that conflict with the claimed chain of custody, or files that were never present on the device that supposedly created them.
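To make the device-side check concrete, here is a minimal sketch of comparing a file's recorded timestamps against a claimed chain of custody. Everything in it is an assumption for illustration: the `CustodyClaim` and `FileRecord` types, the field names, and the three rules are hypothetical, not a forensic standard or any tool's actual method. Real examinations also have to account for time zones, clock drift, and timestamps that change when files are copied.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class CustodyClaim:
    """Hypothetical summary of what the offering party asserts."""
    recorded_at: datetime    # when the recording was supposedly made
    collected_at: datetime   # when the device was supposedly collected


@dataclass
class FileRecord:
    """Hypothetical summary of what an examiner found."""
    created: datetime
    modified: datetime
    present_on_device: bool  # was the file found on the claimed source device?


def find_conflicts(claim: CustodyClaim, record: FileRecord) -> list[str]:
    """Return human-readable conflicts between the claim and the record."""
    conflicts = []
    if record.created > claim.collected_at:
        conflicts.append("file created after the device was collected")
    if record.modified > claim.collected_at:
        conflicts.append("file modified after the device was collected")
    if not record.present_on_device:
        conflicts.append("file not found on the claimed source device")
    return conflicts


# Made-up example values, not drawn from any real case.
claim = CustodyClaim(
    recorded_at=datetime(2025, 1, 10, 14, 0),
    collected_at=datetime(2025, 1, 12, 9, 0),
)
record = FileRecord(
    created=datetime(2025, 1, 15, 8, 30),
    modified=datetime(2025, 1, 15, 8, 45),
    present_on_device=False,
)

for conflict in find_conflicts(claim, record):
    print("Conflict:", conflict)
```

A conflict flagged this way is a lead for an examiner to explain, not proof of fabrication on its own.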

Generative AI detection tools alone will struggle here. Detection scores a file. It does not authenticate the file. A probability score from a detection model can support an initial showing. It cannot finish the job. I have written before about why detection tools alone cannot combat fakes, even if they are critical to fighting the deepfake fraud epidemic. The proof that survives a federal trial runs through the file, the device, and the records that surround the device. Getting that proof requires digital forensic expertise.

Two Sides Of The Same Rule

The new bar reads two ways. Here is what each side gets.

For litigants raising a deepfake challenge, the survey is a road map: bring an affidavit, technical analysis, a specific anomaly in the file, or an external fact the file cannot account for. Bring all of them where they apply.

For the offering side, the same survey points at what is coming. Authentic files will still draw challenges. Most will fail under the bar judges describe. The ones that succeed will force authentication work the proponent has to budget for. I wrote in April about how that authentication cost is already being used as a settlement lever. The survey shows who will be doing more of the paying.

The 2 percent statistic counts what reached a federal courtroom as a contested deepfake. The fabrication that indicted an innocent person in Texas never reached one. The only reason anybody learned about it was the informant’s guilty plea. Until the federal rules adopt a written threshold, the deepfakes that land hardest in federal court may keep being the ones nobody objected to.

Source: https://www.forbes.com/sites/larsdaniel/2026/05/14/federal-prosecutors-indicted-an-innocent-person-on-a-deepfake/