Tangem v5.27: Your Gateway to Safer DeFi

Tangem’s wallet update 5.27 brings a meaningful step forward for users who want to interact with decentralized apps safely and without friction. This release focuses on a redesigned WalletConnect experience, deeper transaction verification, and added token support for Stellar (XLM) and XRP Ledger (XRP). Below you’ll find a clear, practical, and detailed breakdown of the new features plus step-by-step instructions to use WalletConnect with Tangem.

What’s new at a glance

-

Redesigned WalletConnect interface for a simpler, more consistent connection across Solana, Ethereum and EVM chains.

-

Integrated scam detection and dApp vetting powered by Blockaid.

-

Transaction simulation to “dry-run” transactions off-chain and generate a human readable preview.

-

Transaction verification (VTX): transaction + simulation + validation report bundled and cryptographically signed by a verification provider.

-

Improved handling of blind signing by showing precise, verifiable effects before you sign.

-

Know Your dApps (KYDA): automatic checks against malicious or phishing domains and smart-contract analysis.

-

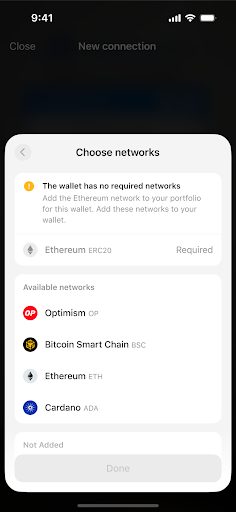

Better network detection and support for multi-network sessions within a single WalletConnect connection.

-

Native token support for Stellar (XLM) and for tokens on the XRP Ledger.

Why these updates matter

Interacting with dApps exposes users to two main risks: connecting to fake or malicious sites, and signing transactions without fully understanding what they do. Tangem 5.27 addresses both problems at the UI and protocol level. The result is a flow that keeps your private keys on the hardware device while giving you human-readable verification and automated risk signals before you approve a signature.

WalletConnect on Tangem: step-by-step guide

Preparation

- Update the Tangem app to version 5.27.
- Make sure the blockchain networks you need are added to your wallet (for example Ethereum, Solana, BNB, Polygon, etc.). You can add networks from the app settings if needed.

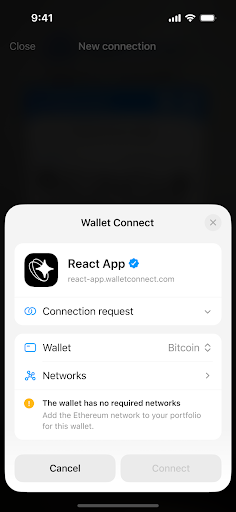

Connect to a dApp (standard flow)

- On the dApp website, choose Connect Wallet and select WalletConnect.
- In the Tangem app, open Settings > WalletConnect > + to enable the QR scanner.
- Scan the QR code shown on the dApp, or tap a deep link if you are using mobile.
- Tangem sends the dApp’s URL and the smart contract addresses to Blockaid for vetting.
- You receive a connection request in the Tangem app. Review the dApp identity and any security flags. If Blockaid approves, you will see a verification checkmark. If Blockaid flags the dApp as high risk or suspicious, you will see a warning and must explicitly acknowledge the risk to continue.
- Approve the connection in the Tangem app. Then tap your Tangem card or ring to confirm the signature operations that follow.
- After finishing, go to Tangem’s WalletConnect menu to view and disconnect active sessions. Always disconnect when you are done.

Note: after updating to v5.27, you do not need to reconnect or reauthorize previously connected dApps. Active sessions should continue working without extra steps.

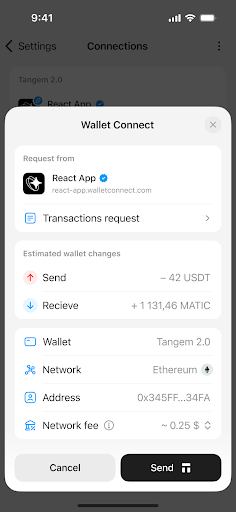

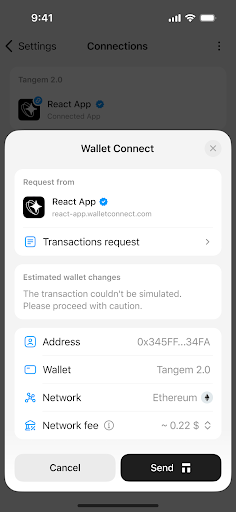

Transaction simulation and blind signing protection

One of the most impactful changes is the transaction simulation layer. Here is how it works in practice:

- Before you sign, the wallet runs an off-chain simulation of your intended transaction.
- The simulation computes exact balance changes, contract calls, token transfers, and potential errors.
- Tangem shows a human-readable preview so you can see precisely what will happen on-chain: tokens moved, recipients, and contract effects.

This approach reduces blind signing risk by giving you a readable representation of what you are approving. If something looks off, you can cancel before any signature is produced.
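To make the preview idea concrete, here is a minimal Python sketch of turning simulated token transfers into net balance changes and a readable summary. The `Transfer` type, the `net_changes` and `preview` functions, and the sample addresses are hypothetical illustrations, not Tangem's or Blockaid's actual data model.

```python
from collections import defaultdict
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class Transfer:
    """One token movement reported by a (hypothetical) simulation result."""
    token: str
    sender: str
    recipient: str
    amount: Decimal


def net_changes(transfers, account):
    """Return the net balance change per token for `account`."""
    changes = defaultdict(Decimal)  # Decimal() is 0, so unseen tokens start at zero
    for t in transfers:
        if t.sender == account:
            changes[t.token] -= t.amount
        if t.recipient == account:
            changes[t.token] += t.amount
    return dict(changes)


def preview(transfers, account):
    """Render non-zero net changes as readable lines, sorted by token symbol."""
    lines = []
    for token, delta in sorted(net_changes(transfers, account).items()):
        if delta == 0:
            continue  # nothing to report for tokens that net out
        verb = "receive" if delta > 0 else "send"
        lines.append(f"You {verb} {abs(delta)} {token}")
    return lines


# Example: a swap in which the user pays 100 USDC and gets 0.03 ETH back.
simulated = [
    Transfer("USDC", "0xUser", "0xPool", Decimal("100")),
    Transfer("ETH", "0xPool", "0xUser", Decimal("0.03")),
]
for line in preview(simulated, "0xUser"):
    print(line)
```

A preview like this is only as trustworthy as the simulation that feeds it, which is the gap the verification step described next is meant to close.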

Transaction verification (VTX) explained

Tangem uses a mechanism called VTX to guarantee integrity between what you review and what is submitted on-chain:

- A verification provider such as Blockaid bundles three items into a single payload: the raw transaction, the simulation output, and a validation report.
- This payload is cryptographically signed by the verification provider. You review and sign the VTX instead of the raw transaction.
- Because the VTX is signed, Tangem can verify that the simulation you saw is exactly the transaction that will be executed. This prevents tampering between the preview stage and submission.
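
The VTX wire format is not described in this article, so the following Python sketch only illustrates the general idea: bundle the transaction, the simulation, and the report, sign the bundle, and refuse to proceed if either the signature or the bundled transaction does not match. HMAC-SHA256 from the standard library stands in for the provider's signature scheme (a real provider would presumably use an asymmetric signature so that the wallet holds only a public key), and every function and field name here is hypothetical.

```python
import hashlib
import hmac
import json


def canonical(obj):
    """Serialize deterministically so signer and verifier hash identical bytes."""
    return json.dumps(obj, sort_keys=True, separators=(",", ":")).encode()


def make_bundle(raw_tx_hex, simulation, report, provider_key):
    """Provider side: bundle the three items and sign them (HMAC as a stand-in)."""
    payload = {"raw_tx": raw_tx_hex, "simulation": simulation, "report": report}
    tag = hmac.new(provider_key, canonical(payload), hashlib.sha256).hexdigest()
    return {"payload": payload, "signature": tag}


def verify_bundle(bundle, raw_tx_hex, provider_key):
    """Wallet side: accept only if the signature is valid and the bundled
    transaction is exactly the one about to be signed."""
    expected = hmac.new(
        provider_key, canonical(bundle["payload"]), hashlib.sha256
    ).hexdigest()
    if not hmac.compare_digest(expected, bundle["signature"]):
        return False  # payload or signature was altered after signing
    return bundle["payload"]["raw_tx"] == raw_tx_hex
```

Because the signature covers all three items together, changing the simulation or the report after the fact invalidates the bundle just as changing the transaction would.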

Know Your dApps (KYDA) and Blockaid security checks

When you attempt a WalletConnect session, Tangem reaches out to Blockaid. Blockaid runs multiple checks in under a second:

- URL and domain checks against an up-to-date repository of phishing and scam domains.
- Static code scans that search contract bytecode for common scam patterns, hidden admin functions, or rug-pull hooks.
- ABI and function inspection to flag risky functions, such as unauthorized minting or owner-only withdrawal methods.
- Behavioral analysis and a risk scoring engine. High risk scores trigger extra warnings or a double-confirmation flow.

If a dApp is blacklisted, Tangem will block it. If a dApp is low reputation or newly created, Tangem will show a security alert. You may override some warnings, but only after explicit acknowledgement of the risk.
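The block, warn, or allow outcome described above can be sketched as a small decision function. This is an illustrative Python sketch only: the `decide` function, the 0-to-1 risk score, and the thresholds (`warn_score`, `min_age_days`) are assumptions made for the example, not values published by Tangem or Blockaid.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    WARN = "warn"    # user may continue only after explicit acknowledgement
    BLOCK = "block"  # connection is refused outright


def decide(domain, blocklist, risk_score, domain_age_days,
           warn_score=0.5, min_age_days=30):
    """Map vetting signals to a connection decision.

    `risk_score` is assumed to be in [0, 1]; both thresholds are
    hypothetical defaults chosen for illustration.
    """
    normalized = domain.strip().lower().rstrip(".")
    if normalized in blocklist:
        return Decision.BLOCK
    if risk_score >= warn_score or domain_age_days < min_age_days:
        return Decision.WARN
    return Decision.ALLOW
```

Checking the blocklist first means a listed domain is refused regardless of its score, which matches the behavior described above where only warnings, not blocks, can be overridden.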

Network detection and multi-network sessions

Tangem now automatically detects whether the network the dApp is requesting is supported in your wallet. You can also allow a single WalletConnect session to interact with multiple networks, for example a dApp that uses both Ethereum and a Layer 2. The app will surface which networks are in use and any network mismatches before you sign.
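
WalletConnect v2 identifies networks with CAIP-2 chain IDs such as `eip155:1` (Ethereum mainnet) and `eip155:10` (OP Mainnet, a Layer 2). As a rough Python sketch of the detection step, assuming a hypothetical `check_networks` helper and a session request that lists required and optional chains, a wallet could compare the request against the networks it has enabled:

```python
def check_networks(required, optional, supported):
    """Split a session request into approved chains and missing required chains.

    All arguments are iterables of CAIP-2 chain IDs, e.g. "eip155:1".
    Returns (approved, missing): `approved` keeps request order without
    duplicates; a non-empty `missing` means the session cannot proceed as asked.
    """
    supported = set(supported)
    required = list(required)
    optional = list(optional)
    missing = [chain for chain in required if chain not in supported]
    requested = dict.fromkeys(required + optional)  # ordered de-duplication
    approved = [chain for chain in requested if chain in supported]
    return approved, missing


# Example: a dApp that needs Ethereum and can also use OP Mainnet or Polygon,
# connecting to a wallet that has Ethereum and OP Mainnet enabled.
approved, missing = check_networks(
    required=["eip155:1"],
    optional=["eip155:10", "eip155:137"],
    supported={"eip155:1", "eip155:10"},
)
print(approved, missing)
```

In this sketch an unsupported optional chain is silently left out of the session, while an unsupported required chain is reported so the user can add the network first.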

Expanded token support: Stellar and XRP Ledger

Version 5.27 adds native support for tokens on Stellar (XLM) and for tokens on the XRP Ledger. You can now:

- Store, send, and receive Stellar-based tokens directly in Tangem.
- Manage XRP Ledger tokens from within the same Tangem interface.

This widens the range of assets you can securely hold on your Tangem card or ring.

Best practices when using WalletConnect with Tangem

- Always verify the dApp domain and prefer bookmarked or official links.
- Treat Tangem warnings and double-confirmation prompts as red flags. If in doubt, cancel and research the dApp.
- Keep the Tangem app updated so you receive the latest blacklist data and security logic.
- Use the WalletConnect menu to disconnect sessions when you are finished.
- Revoke unused token allowances periodically using services like revoke.cash or a reputable block explorer.
- Never approve transactions that promise unrealistic returns, instant giveaways, or require unexpected approvals.

Final notes

Tangem v5.27 blends hardware-backed signing with real-time dApp verification. The transaction simulation and VTX model give you a human-readable, cryptographically verified way to see what a transaction will do before you sign. The KYDA checks and Blockaid integration reduce the chance of connecting to fraudulent sites. With native support for Stellar and XRP Ledger tokens, Tangem also becomes a more complete wallet for everyday crypto management.

This article was originally published as Tangem v5.27: Your Gateway to Safer DeFi on Crypto Breaking News – your trusted source for crypto news, Bitcoin news, and blockchain updates.
